moo

Welcome to the moo documentation. moo is a small, self-contained AI agent written in C — plus the foundation libraries it rides on. It ships as a terminal app (moo) that talks to any OpenAI-compatible endpoint (Kimi, GLM, DeepSeek, OpenAI itself, …); an Anthropic-compatible provider is on the roadmap. Runs on macOS and Linux; Windows is on the roadmap but not a near-term priority.

- Designed and reviewed by @mivinci

- Coded by CodeBuddy (VSCode plugin) with claude-opus-4.7 and GLM-5.1

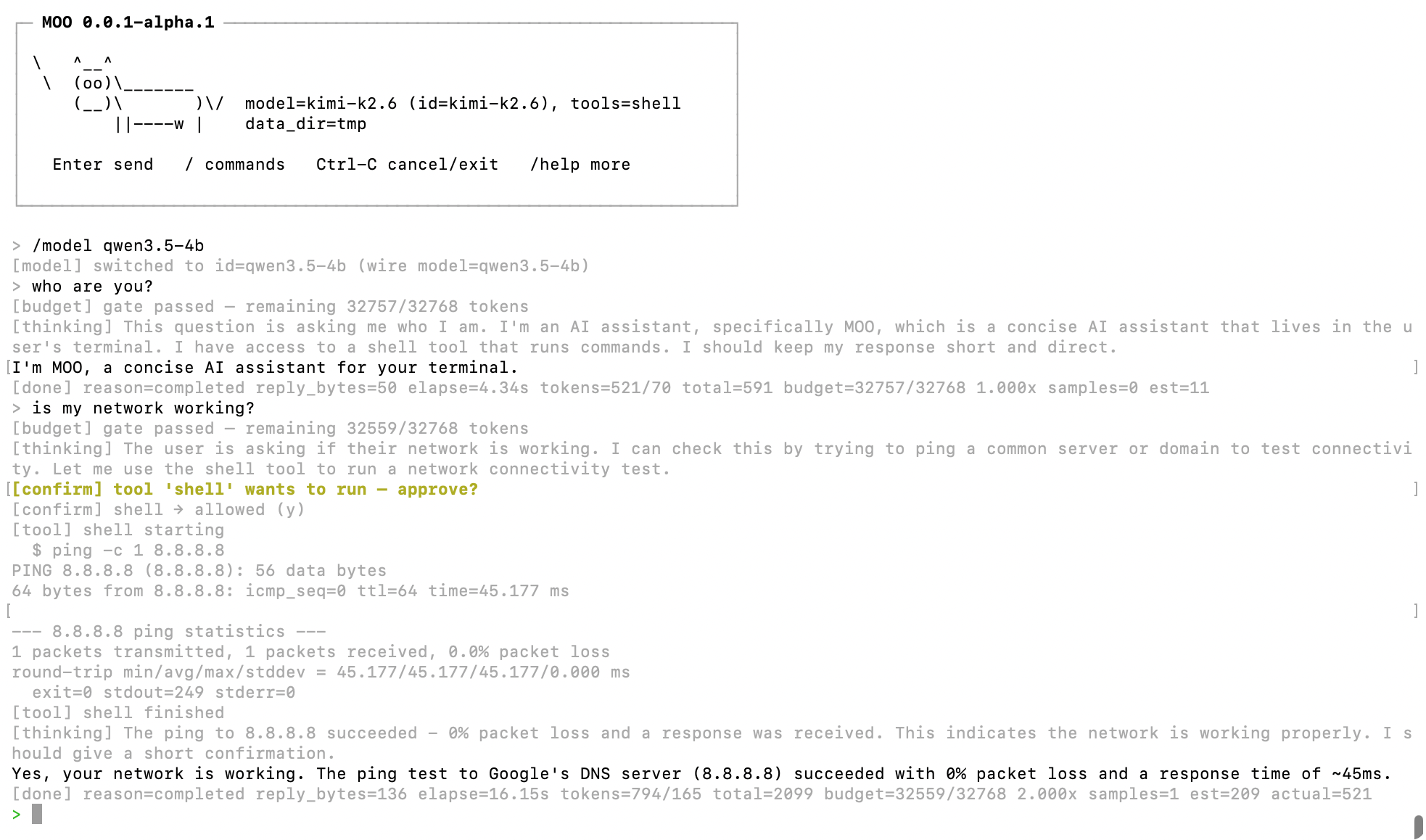

Here's what a session looks like:

Architecture Overview

moo is layered. The agent core (xagent) sits on top of a set of reusable C libraries that together form the runtime: an event loop, buffers, networking, HTTP, logging, a line editor, and more. Each lower-level lib is independently usable in your own project.

graph TD

subgraph "App Layer"

APP["apps/cli<br/>the moo REPL"]

end

subgraph "Agent Core"

XAGENT["xagent<br/>agent / session / query /<br/>message / model / provider / tool / budget"]

end

subgraph "Foundation Libraries"

XHTTP["xhttp<br/>HTTP client & server<br/>SSE · WebSocket · TLS"]

XNET["xnet<br/>URL / DNS / TLS config / TCP"]

XBUF["xbuf<br/>Buffer Primitives"]

XLINE["xline<br/>CJK-aware line editor"]

XLOG["xlog<br/>Async Logging"]

XCRYPTO["xcrypto<br/>SHA-1 / SHA-256 / MD5 / HMAC / CRC-32"]

XJS["xjs<br/>Embeddable JS (QuickJS-ng)"]

XP2P["xp2p<br/>ICE / STUN / TURN / SCTP / DTLS"]

XFER["xfer<br/>P2P file transfer (WebRTC DataChannel)"]

XBASE["xbase<br/>Event loop · Timers · Tasks · Sockets · Memory"]

end

APP --> XAGENT

APP --> XLINE

XAGENT --> XHTTP

XAGENT --> XBASE

XAGENT --> XBUF

XHTTP --> XNET

XHTTP --> XBUF

XHTTP --> XBASE

XNET --> XBASE

XLINE --> XBASE

XLOG --> XBASE

XCRYPTO --> XBASE

XJS --> XBASE

XP2P --> XNET

XP2P --> XCRYPTO

XP2P --> XBASE

XFER --> XP2P

XFER --> XHTTP

XBUF -->|"atomic.h"| XBASE

style XAGENT fill:#e67e22,color:#fff

style APP fill:#c0392b,color:#fff

style XBASE fill:#50b86c,color:#fff

style XBUF fill:#4a90d9,color:#fff

style XNET fill:#e74c3c,color:#fff

style XHTTP fill:#f5a623,color:#fff

style XLINE fill:#1abc9c,color:#fff

style XLOG fill:#9b59b6,color:#fff

style XCRYPTO fill:#34495e,color:#fff

style XJS fill:#16a085,color:#fff

style XP2P fill:#2ecc71,color:#fff

style XFER fill:#27ae60,color:#fff

Module Index

xagent — The Agent

moo's headline module: a non-blocking, single-loop AI agent runtime. No GC, no green threads, no hidden allocations on the hot path.

| Sub-Module | Description |
|---|---|
| agent.h | Long-lived persona — provider/model, system prompt, tool set, limits. Mints sessions. |
| session.h | Stateful conversation — owns history, runs the tool-call loop, emits on_text / on_thinking / on_tool / on_done |
| query.h | One round-trip to the model, including streaming decode and sidecar supervision |
| message.h | Chat-message value type with tool-call envelopes |
| model.h | Model registry — {id → provider + wire-model + limits}; powers runtime model switching |
| provider.h · provider_openai.c | Backend vtable + OpenAI-compatible implementation (chat/completions, SSE). Anthropic provider planned. |
| tool.h · tool_shell.h | Tool definition ABI + a built-in shell tool with confirmation hooks |
| budget.h | Prompt-size estimator, rolling trimmer, self-calibrating token budgeter |

Design notes: context budget · layered memory · three-layer conversation model.

apps/cli — The moo REPL

A terminal app built on xagent + xline. Streaming output, slash commands (/help /model /tokens /cancel /bypass …), tool-call confirmation prompts, persistent history with reverse search, and model hot-swap via models.json. See the project README for the quick start.

xbase — Core Primitives

The foundation every other module sits on. Event loop, timers, tasks, async sockets, memory, lock-free structures, plus a few batteries-included utilities.

| Sub-Module | Description |

|---|---|

| event.h | Cross-platform event loop — kqueue (macOS) / epoll (Linux) / poll (fallback) |

| timer.h | Monotonic timer with Push (thread-pool) and Poll (lock-free MPSC) fire modes |

| task.h | N:M task model — lightweight tasks multiplexed onto a thread pool |

| socket.h | Async socket abstraction with idle-timeout support |

| command.h | Async subprocess execution (used by xagent's shell tool) |

| flag.h | GNU-style command-line flag parser |

| memory.h | Reference-counted allocation with vtable-driven lifecycle |

| string.h | Small-string-optimized mutable byte string |

| array.h / list.h / map.h / slab.h | Generic containers |

| error.h | Unified error codes and human-readable messages |

| heap.h | Min-heap with index tracking (used by timer subsystem) |

| mpsc.h | Lock-free multi-producer / single-consumer queue |

| atomic.h | Compiler-portable atomic operations (GCC/Clang builtins) |

| log.h | Per-thread callback-based logging with optional backtrace |

| backtrace.h | Platform-adaptive stack trace (libunwind > execinfo > stub) |

| base64.h / hex.h | Binary-to-text codecs |

| time.h | Time utilities: xMonoMs() (monotonic) and xWallMs() (wall-clock) |

xbuf — Buffer Primitives

Three buffer types for different I/O patterns — linear, ring, and block-chain.

| Sub-Module | Description |

|---|---|

| buf.h | Linear auto-growing byte buffer with 2× expansion |

| ring.h | Fixed-size ring buffer with power-of-2 mask indexing |

| io.h | Reference-counted block-chain I/O buffer with zero-copy split/cut |
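The "power-of-2 mask indexing" mentioned for ring.h can be illustrated with a small sketch. This is not the xbuf API: `DemoRing`, `demo_ring_write`, and `demo_ring_read` are hypothetical names, and the fixed 8-byte capacity is chosen only to keep the example short. The idea is that when the capacity is a power of two, `index & (capacity - 1)` replaces the more expensive `index % capacity`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical demo type, not the real ring.h interface. */
enum { DEMO_RING_CAP = 8 };
_Static_assert((DEMO_RING_CAP & (DEMO_RING_CAP - 1)) == 0,
               "capacity must be a power of two");

typedef struct {
    uint8_t data[DEMO_RING_CAP];
    size_t  head;   /* total bytes read so far (never wrapped)    */
    size_t  tail;   /* total bytes written so far (never wrapped) */
} DemoRing;

static size_t demo_ring_len(const DemoRing *r) { return r->tail - r->head; }

/* Copy in up to n bytes; returns how many were accepted. */
static size_t demo_ring_write(DemoRing *r, const uint8_t *src, size_t n) {
    size_t space = DEMO_RING_CAP - demo_ring_len(r);
    if (n > space) n = space;
    for (size_t i = 0; i < n; i++)
        r->data[(r->tail + i) & (DEMO_RING_CAP - 1)] = src[i];  /* mask, not modulo */
    r->tail += n;
    return n;
}

/* Copy out up to n bytes; returns how many were produced. */
static size_t demo_ring_read(DemoRing *r, uint8_t *dst, size_t n) {
    size_t len = demo_ring_len(r);
    if (n > len) n = len;
    for (size_t i = 0; i < n; i++)
        dst[i] = r->data[(r->head + i) & (DEMO_RING_CAP - 1)];
    r->head += n;
    return n;
}
```

Keeping `head` and `tail` as ever-growing counters and masking only on access means "full" and "empty" are distinguishable without sacrificing a slot.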

xnet — Networking Primitives

Shared networking utilities: URL parser, async DNS resolver, and TLS configuration types used by higher-level modules.

| Sub-Module | Description |

|---|---|

| url.h | Lightweight URL parser with zero-copy component extraction |

| dns.h | Async DNS resolution via thread-pool offload |

| tls.h | Shared TLS configuration types (client & server) |

| tcp.h | Async TCP connection, connector & listener with optional TLS |

xhttp — Async HTTP Client & Server & WebSocket

Full-featured async HTTP framework: libcurl-powered client with SSE streaming (which xagent uses to stream model responses), event-driven server with HTTP/1.1 & HTTP/2 (h2c), TLS support (OpenSSL / mbedTLS), and RFC 6455 WebSocket (server & client).

| Sub-Module | Description |

|---|---|

| client.h | Async HTTP client (GET / POST / PUT / DELETE / PATCH / HEAD) |

| sse.c | SSE streaming client with W3C-compliant event parsing |

| server.h | Event-driven HTTP server with HTTP/1.1 and HTTP/2 (h2c) |

| ws.h | RFC 6455 WebSocket server with handler-initiated upgrade |

| ws.h | RFC 6455 WebSocket client with async connect |

| transport.h | Pluggable TLS transport layer (OpenSSL / mbedTLS / plain) |
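Since SSE is how xagent streams model responses, a rough sketch of the event framing may help. This is a deliberately simplified illustration, not the sse.c implementation: `DemoSse`, `demo_sse_line`, and `demo_sse_feed` are hypothetical names, only the `data` field is handled, and the caller is assumed to have already split the stream into lines.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

enum { DEMO_SSE_MAX = 256 };

typedef struct {
    char   data[DEMO_SSE_MAX]; /* payload of the event being assembled */
    size_t len;
    int    has_data;           /* at least one data line was seen      */
    int    dispatched;         /* previous call completed an event     */
} DemoSse;

/* Feed one line without its terminator. Returns 1 when a blank line
 * completes an event; s->data then holds the NUL-terminated payload. */
static int demo_sse_line(DemoSse *s, const char *line, size_t n) {
    if (s->dispatched) {                /* start assembling a fresh event */
        s->len = 0; s->has_data = 0; s->dispatched = 0;
    }
    if (n == 0) {                       /* blank line ends the event */
        if (!s->has_data) return 0;
        s->data[s->len] = '\0';
        s->dispatched = 1;
        return 1;
    }
    if (line[0] == ':') return 0;       /* comment line, e.g. keep-alive */

    const char *colon = memchr(line, ':', n);
    size_t name_len = colon ? (size_t)(colon - line) : n;
    if (name_len != 4 || memcmp(line, "data", 4) != 0)
        return 0;                       /* sketch: other fields are ignored */

    const char *val = colon ? colon + 1 : line + n;
    size_t val_len = (size_t)(line + n - val);
    if (val_len > 0 && val[0] == ' ') { val++; val_len--; } /* strip one space */

    size_t need = val_len + (s->has_data ? 1 : 0);
    if (s->len + need >= DEMO_SSE_MAX) return 0;  /* sketch: drop oversized input */
    if (s->has_data) s->data[s->len++] = '\n';    /* multiple data lines join with \n */
    memcpy(s->data + s->len, val, val_len);
    s->len += val_len;
    s->has_data = 1;
    return 0;
}

/* Convenience wrapper for NUL-terminated lines. */
static int demo_sse_feed(DemoSse *s, const char *line) {
    return demo_sse_line(s, line, strlen(line));
}
```

A real parser also has to handle the `event`, `id`, and `retry` fields, bare `\r` line endings, and lines split across network reads.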

xline — CJK-Aware Line Editor

Powers the moo REPL's input: Unicode-width-aware editing, persistent history, reverse search (Ctrl-R), and redraw-while-streaming so the prompt stays put while the AI is typing above it. Docs TBD.

xlog — Async Logging

High-performance async logger with MPSC queue, three flush modes, and file rotation.

| Sub-Module | Description |

|---|---|

| logger.h | Async logger with Timer / Notify / Mixed modes and XLOG_* macros |

xjs — Embeddable JavaScript Engine

QuickJS-ng backend behind a JSC-shaped C API: ES modules, native class wrappers, stable value types.

xcrypto — Cryptographic Primitives

SHA-1, SHA-256 (OpenSSL / mbedTLS / builtin), MD5, CRC-32, and generic HMAC (HMAC-SHA1 / HMAC-SHA256 / HMAC-MD5).

xp2p — P2P Connectivity

ICE-based peer-to-peer connectivity with full STUN/TURN client stack, SDP codec, and NAT traversal. Ships with DTLS + SCTP + DataChannel for WebRTC browser interop.

| Sub-Module | Description |
|---|---|
| ice_agent.h | Full ICE agent — candidate gathering, connectivity checks, nomination, data transport |
| peer_connection.h | High-level peer connection (DTLS + SCTP + DataChannel) |
| stun_msg.h / stun_attr.h / stun_txn.h | STUN message / attribute / transaction (RFC 5389) |
| turn_client.h | TURN allocation, permissions, channel bindings (RFC 5766) |
| sdp.h | SDP offer/answer encoding and decoding (RFC 4566) |

xfer — P2P File Transfer

Zero-config send/receive over WebRTC DataChannel — signaling, chunking, SHA-1 verification, resume support.

bench — End-to-End Benchmarks

End-to-end benchmark results comparing moo's foundation libs against other frameworks. These numbers are what makes the agent loop feel free — they're not the agent's numbers themselves.

| Benchmark | Description |

|---|---|

| HTTP/1.1 Server | moo single-threaded HTTP/1.1 server vs Go net/http — GET/POST throughput and latency |

| HTTP/2 Server | moo single-threaded HTTP/2 (h2c) server vs Go net/http h2c — GET/POST throughput and latency |

| HTTPS Server | moo single-threaded HTTPS (TLS 1.3) server vs Go net/http — GET/POST throughput and latency |

Quick Navigation Guide

By Use Case

| I want to... | Start here |

|---|---|

| Run the moo agent | Project README — Quick Start |

| Embed the agent in my own app | libs/xagent/agent.h + session.h (docs TBD) |

| Add a tool to the agent | libs/xagent/tool.h (shell tool as reference: tool_shell.h) |

| Plug in a new LLM provider | libs/xagent/provider.h + provider_openai.c as reference |

| Understand context budgeting | design/context_budget.md |

| Understand layered memory | design/layered_memory.md |

| Build an event-driven server | xbase/event.h → xbase/socket.h |

| Schedule timers | xbase/timer.h |

| Run tasks on a thread pool | xbase/task.h |

| Spawn subprocesses | xbase/command.h |

| Parse command-line flags | xbase/flag.h |

| Make async HTTP requests | xhttp/client.h |

| Stream LLM API responses (SSE) | xhttp/sse.c |

| Build an HTTP server | xhttp/server.h |

| Add WebSocket server / client | xhttp/ws.h · ws_client |

| Parse a URL · resolve DNS · make TCP / TLS connections | xnet |

| Add async logging | xlog/logger.h |

| Manage object lifecycles | xbase/memory.h |

| Choose the right buffer type | xbuf overview |

| Build a lock-free producer/consumer pipeline | xbase/mpsc.h |

| Embed JavaScript | xjs overview |

| Hash / HMAC / CRC | xcrypto overview |

| Establish P2P connectivity | xp2p/ice_agent.h · peer_connection.h |

| P2P file transfer | xfer overview |

| See micro-benchmark results | Each module doc has a Benchmark section (e.g. mpsc.h) |

| See HTTP server benchmarks | HTTP/1.1 · HTTP/2 · HTTPS |

By Dependency Level (foundation libs)

Level 0 (no deps) : atomic.h, error.h, time.h

Level 1 (atomic only) : heap.h, mpsc.h

Level 2 (Level 0-1) : memory.h, log.h, backtrace.h, buf.h, ring.h

Level 3 (Level 0-2) : event.h, io.h, url.h, tls.h

Level 4 (event loop) : timer.h, task.h, socket.h, command.h, dns.h, tcp.h, logger.h, client.h, server.h, ws.h

Level 5 (xbase+xnet) : ice_agent.h, stun_msg.h, turn_client.h, sdp.h

Level 6 (top) : xagent (uses xbase + xbuf + xhttp), xfer (uses xp2p + xhttp)

Module Dependency Graph

The graph below covers the foundation layer only — xagent and xfer sit above these and use them. See the top-level Architecture Overview for the full picture.

graph BT

subgraph "Level 0"

ATOMIC["atomic.h"]

ERROR["error.h"]

TIME["time.h"]

end

subgraph "Level 1"

HEAP["heap.h"]

MPSC["mpsc.h"]

end

subgraph "Level 2"

MEMORY["memory.h"]

LOG["log.h"]

BT_["backtrace.h"]

BUF["buf.h"]

RING["ring.h"]

end

subgraph "Level 3"

EVENT["event.h"]

IO["io.h"]

URL["url.h"]

TLS_CONF["tls.h"]

end

subgraph "Level 4"

TIMER["timer.h"]

TASK["task.h"]

SOCKET["socket.h"]

COMMAND["command.h"]

DNS["dns.h"]

TCP["tcp.h"]

LOGGER["logger.h"]

CLIENT["client.h"]

SERVER["server.h"]

WS["ws.h"]

end

subgraph "Level 5"

ICE_AGENT["ice_agent.h"]

STUN_MSG["stun_msg.h"]

TURN_CLIENT["turn_client.h"]

SDP_["sdp.h"]

end

HEAP --> ATOMIC

MPSC --> ATOMIC

MEMORY --> ERROR

LOG --> BT_

IO --> ATOMIC

IO --> BUF

EVENT --> HEAP

EVENT --> MPSC

EVENT --> TIME

TIMER --> EVENT

TASK --> EVENT

SOCKET --> EVENT

COMMAND --> EVENT

DNS --> EVENT

TCP --> EVENT

TCP --> DNS

TCP --> SOCKET

TCP --> TLS_CONF

LOGGER --> EVENT

LOGGER --> MPSC

LOGGER --> LOG

CLIENT --> EVENT

CLIENT --> BUF

CLIENT --> URL

CLIENT --> DNS

CLIENT --> TLS_CONF

SERVER --> SOCKET

SERVER --> BUF

SERVER --> TLS_CONF

WS --> SERVER

WS --> URL

ICE_AGENT --> EVENT

ICE_AGENT --> SOCKET

ICE_AGENT --> STUN_MSG

ICE_AGENT --> TURN_CLIENT

ICE_AGENT --> SDP_

STUN_MSG --> MEMORY

TURN_CLIENT --> STUN_MSG

SDP_ --> MEMORY

style EVENT fill:#50b86c,color:#fff

style URL fill:#e74c3c,color:#fff

style DNS fill:#e74c3c,color:#fff

style TCP fill:#e74c3c,color:#fff

style TLS_CONF fill:#e74c3c,color:#fff

style CLIENT fill:#f5a623,color:#fff

style SERVER fill:#f5a623,color:#fff

style WS fill:#f5a623,color:#fff

style LOGGER fill:#9b59b6,color:#fff

style ICE_AGENT fill:#2ecc71,color:#fff

style STUN_MSG fill:#2ecc71,color:#fff

style TURN_CLIENT fill:#2ecc71,color:#fff

style SDP_ fill:#2ecc71,color:#fff

Build & Test

```bash
# Build libraries + tests (Debug)
cmake -S . -B build -DCMAKE_BUILD_TYPE=Debug
cmake --build build --parallel

# Build the moo CLI (apps/ is OFF by default)
cmake -S . -B build -DCMAKE_BUILD_TYPE=Release \
  -DMOO_BUILD_APPS=ON -DMOO_BUILD_TESTS=OFF -DMOO_BUILD_BENCHMARKS=OFF
cmake --build build --parallel

# Run tests
ctest --test-dir build --output-on-failure --parallel 4
```

See the project README for full build instructions, the complete option table, TLS backend selection, prerequisites, and container-based Linux testing.

Benchmark

Micro-benchmark results are included in each module's documentation page (see the Benchmark section at the bottom of each page, e.g. mpsc.h, buf.h).

End-to-end benchmarks:

| Benchmark | Description |

|---|---|

| HTTP/1.1 Server | moo vs Go net/http — 152K req/s single-threaded, 15-60% faster across all scenarios |

| HTTP/2 Server | moo vs Go h2c — single-threaded HTTP/2 (h2c) throughput comparison |

| HTTPS Server | moo vs Go HTTPS — single-threaded TLS 1.3 throughput comparison |

License

MIT © 2025-present @mivinci and moo contributors

Libraries

moo is organized into nine libraries, layered from low-level core primitives up to high-level async networking, P2P connectivity, file transfer, and an embeddable JavaScript engine.

┌─────────────────────────────────────────────┐

│ Application Layer │

├──────────────────────┬──────────────────────┤

│ xfer │ xjs │

│ P2P File Transfer │ JS Scripting (QJS) │

├──────────────────────┼──────────────────────┤

│ xhttp │ xlog │

│ HTTP Client/Server │ Async Logging │

│ WebSocket │ │

├──────────────────────┼──────────────────────┤

│ xp2p │ │

│ ICE / STUN / TURN │ │

├──────────────────────┴──────────────────────┤

│ xnet — URL / DNS / TCP / TLS Config │

├─────────────────────────────────────────────┤

│ xbuf — Linear / Ring / Block-Chain Buffer │

├──────────────────────┬──────────────────────┤

│ xbase │ xcrypto │

│ Event Loop / Timer │ SHA-1/256 MD5 CRC │

│ Task / Memory │ HMAC / Crypto │

└──────────────────────┴──────────────────────┘

Overview

| Library | Description |

|---|---|

| xbase | Core primitives — event loop, timers, tasks, async sockets, memory, lock-free data structures |

| xbuf | Buffer primitives — linear, ring, and block-chain I/O buffers |

| xnet | Networking primitives — URL parser, async DNS resolver, TCP, shared TLS configuration types |

| xhttp | Async HTTP client & server — libcurl multi-socket client with SSE streaming, HTTP/1.1 & HTTP/2 async server with TLS, WebSocket server & client |

| xlog | Async logging — MPSC queue, timer/pipe flush, log rotation |

| xjs | Embeddable JavaScript engine — QuickJS-ng backend, JSC-shaped C API, ES modules, native class wrappers |

| xcrypto | Cryptographic primitives — SHA-1, SHA-256 (OpenSSL / mbedTLS / builtin), MD5, CRC-32, generic HMAC with HMAC-SHA1, HMAC-SHA256, HMAC-MD5 |

| xp2p | P2P connectivity — ICE agent, STUN/TURN client, SDP codec, NAT traversal |

| xfer | P2P file transfer — chunked transfer over WebRTC DataChannel with signaling, resume, and SHA-1 integrity |

Dependency Order

Level 0 (no deps) : atomic.h, error.h, time.h

Level 1 (atomic only) : heap.h, mpsc.h

Level 2 (Level 0-1) : memory.h, log.h, backtrace.h, buf.h, ring.h

Level 3 (Level 0-2) : event.h, io.h, url.h, tls.h

Level 4 (event loop) : timer.h, task.h, socket.h, dns.h, tcp.h, logger.h, client.h, server.h, ws.h

Level 5 (xbase+xnet) : ice_agent.h, stun_msg.h, stun_attr.h, stun_txn.h, turn_client.h, sdp.h

Level 6 (xp2p+xhttp) : xfer.h, xfer_signal.h, xfer_protocol.h

Level ∞ (standalone) : sha1.h, sha256.h, md5.h, crc32.h, hmac.h (xcrypto — depends only on xbase error codes)

Level ∞ (standalone) : js.h (xjs — depends only on xbase; pulls QuickJS-ng privately)

xbase — Event-Driven Async Foundation

Introduction

xbase is the foundational module of moo, providing the core primitives for building event-driven, asynchronous C applications on macOS and Linux. It delivers a cross-platform event loop, monotonic timers, an N:M task model (thread pool), async sockets, reference-counted memory management, lock-free data structures, and essential utilities — all in a minimal, zero-dependency C99 package.

xbase is designed to be the "kernel" that higher-level moo modules (xbuf, xhttp, xlog) build upon. Every I/O-bound or timer-driven feature in moo ultimately relies on xbase's event loop and concurrency primitives.

Design Philosophy

- Edge-Triggered by Default — The event loop operates in edge-triggered mode across all backends (kqueue, epoll, poll), encouraging callers to drain file descriptors completely. This yields higher throughput and fewer spurious wakeups compared to level-triggered designs.

- Layered Abstraction — Low-level primitives (atomic, mpsc, heap) are composed into mid-level services (timer, task) which are then integrated into the high-level event loop. Each layer is independently usable.

- Zero Allocation in the Hot Path — Data structures like the MPSC queue and min-heap are designed to avoid dynamic allocation during normal operation. Memory is pre-allocated or embedded in user structs.

- Thread-Safety Where It Matters — APIs that are expected to be called cross-thread (e.g., xEventWake, xTimerSubmitAfter, xMpscPush) are explicitly designed to be thread-safe. Single-threaded APIs are documented as such.

- vtable-Driven Lifecycle — The memory module uses a virtual table pattern (ctor/dtor/retain/release) to provide reference-counted object management in pure C, inspired by Objective-C's retain/release model.

- Platform Adaptation at Build Time — Platform-specific code (kqueue vs. epoll, libunwind vs. execinfo) is selected via compile-time macros, keeping runtime overhead at zero.
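To make the vtable-driven lifecycle idea concrete, here is a minimal sketch of reference counting with a hidden header in plain C. It is an illustration only and does not reproduce memory.h: `DemoVTable`, `demo_alloc`, `demo_retain`, and `demo_release` are hypothetical names, and the vtable is reduced to ctor/dtor with retain and release implemented as ordinary functions.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical lifecycle table; the real module may carry more hooks. */
typedef struct DemoVTable {
    void (*ctor)(void *obj);   /* called once after allocation     */
    void (*dtor)(void *obj);   /* called once when refcount hits 0 */
} DemoVTable;

/* Header stored immediately before the user-visible object. */
typedef struct DemoHeader {
    const DemoVTable *vt;
    atomic_int        refs;
} DemoHeader;

/* Allocate a zeroed object of `size` bytes with refcount 1.
 * Sketch assumption: the payload needs no stricter alignment than the header. */
static void *demo_alloc(const DemoVTable *vt, size_t size) {
    DemoHeader *h = calloc(1, sizeof *h + size);
    if (!h) return NULL;
    h->vt = vt;
    atomic_init(&h->refs, 1);
    void *obj = h + 1;
    if (vt && vt->ctor) vt->ctor(obj);
    return obj;
}

static void *demo_retain(void *obj) {
    DemoHeader *h = (DemoHeader *)obj - 1;
    atomic_fetch_add_explicit(&h->refs, 1, memory_order_relaxed);
    return obj;
}

static void demo_release(void *obj) {
    DemoHeader *h = (DemoHeader *)obj - 1;
    /* The thread that drops the last reference runs the destructor. */
    if (atomic_fetch_sub_explicit(&h->refs, 1, memory_order_acq_rel) == 1) {
        if (h->vt && h->vt->dtor) h->vt->dtor(obj);
        free(h);
    }
}

/* Demo type: counts destructor calls so the behaviour is observable. */
static int demo_dtor_calls;
static void demo_point_dtor(void *obj) { (void)obj; demo_dtor_calls++; }
static const DemoVTable demo_point_vt = { NULL, demo_point_dtor };
```

The caller only ever sees the payload pointer; the header lives at a negative offset, which is what lets retain and release work on any object type.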

Architecture

graph TD

subgraph "High-Level Services"

EVENT["event.h<br/>Event Loop"]

TIMER["timer.h<br/>Monotonic Timer"]

TASK["task.h<br/>N:M Task Model"]

SOCKET["socket.h<br/>Async Socket"]

CMD["cmd.h<br/>Command Executor"]

end

subgraph "Infrastructure"

MEMORY["memory.h<br/>Ref-Counted Memory"]

SLAB["slab.h<br/>Slab Object Pool"]

LOG["log.h<br/>Thread-Local Log"]

BACKTRACE["backtrace.h<br/>Stack Backtrace"]

ERROR["error.h<br/>Error Codes"]

TIME["time.h<br/>Time Utilities"]

end

subgraph "Data Structures & Concurrency"

HEAP["heap.h<br/>Min-Heap"]

MAP["map.h<br/>Generic Map"]

LIST["list.h<br/>Doubly-Linked List"]

ARRAY["array.h<br/>Dynamic Array"]

MPSC["mpsc.h<br/>Lock-Free MPSC Queue"]

ATOMIC["atomic.h<br/>Atomic Operations"]

end

EVENT -->|"registers timers"| TIMER

EVENT -->|"offloads work"| TASK

EVENT -->|"wraps fd"| SOCKET

EVENT -->|"SIGCHLD + I/O watch"| CMD

SOCKET -->|"monitors I/O"| EVENT

SOCKET -->|"idle timeout"| EVENT

TIMER -->|"schedules entries"| HEAP

TIMER -->|"poll-mode queue"| MPSC

TIMER -->|"push-mode dispatch"| TASK

TIMER -->|"reads clock"| TIME

MPSC -->|"CAS operations"| ATOMIC

MEMORY -->|"atomic refcount"| ATOMIC

SLAB -->|"intrusive freelist"| ATOMIC

TIMER -->|"entry allocation"| SLAB

TASK -->|"task allocation"| SLAB

MAP -->|"node allocation"| SLAB

LOG -->|"fatal backtrace"| BACKTRACE

LOG -->|"error formatting"| ERROR

EVENT -->|"reads clock"| TIME

style EVENT fill:#4a90d9,color:#fff

style TIMER fill:#4a90d9,color:#fff

style TASK fill:#4a90d9,color:#fff

style SOCKET fill:#4a90d9,color:#fff

style CMD fill:#4a90d9,color:#fff

style MEMORY fill:#50b86c,color:#fff

style SLAB fill:#50b86c,color:#fff

style LOG fill:#50b86c,color:#fff

style BACKTRACE fill:#50b86c,color:#fff

style ERROR fill:#50b86c,color:#fff

style TIME fill:#50b86c,color:#fff

style HEAP fill:#f5a623,color:#fff

style MAP fill:#f5a623,color:#fff

style LIST fill:#f5a623,color:#fff

style ARRAY fill:#f5a623,color:#fff

style MPSC fill:#f5a623,color:#fff

style ATOMIC fill:#f5a623,color:#fff

Sub-Module Overview

| Header | Document | Description |
|---|---|---|
| event.h | event.md | Cross-platform event loop (edge-triggered) — kqueue / epoll / poll backends with built-in timer and thread-pool integration |
| timer.h | timer.md | Monotonic timer with push (thread-pool) and poll (lock-free MPSC) fire modes |
| task.h | task.md | N:M task model — lightweight tasks multiplexed onto a configurable thread pool |
| socket.h | socket.md | Async socket abstraction with idle-timeout support over xEventLoop |
| memory.h | memory.md | Reference-counted allocation with vtable-driven lifecycle (ctor/dtor/retain/release) |
| slab.h | slab.md | Fixed-size object pool — single-threaded xSlab and thread-safe xSlabMt variants for high-frequency small allocations |
| log.h | log.md | Per-thread callback-based logging with optional backtrace on fatal |
| backtrace.h | backtrace.md | Platform-adaptive stack trace capture (libunwind > execinfo > stub) |
| error.h | error.md | Unified error codes (xErrno) and human-readable messages |
| heap.h | heap.md | Generic min-heap with O(log n) insert/remove, used internally by the timer subsystem |
| map.h | map.md | Generic key-value map with three backends: hash table, flat table, and red-black tree |
| mpsc.h | mpsc.md | Lock-free multi-producer / single-consumer intrusive queue |
| atomic.h | atomic.md | Compiler-portable atomic operations (GCC/Clang __atomic builtins) |
| io.h | io.md | Abstract I/O interfaces (Reader, Writer, Seeker, Closer) with convenience helpers (xReadFull, xReadAll, xWritev, etc.) |
| list.h | list.md | Intrusive doubly-linked circular list — zero-allocation, inline implementation derived from Linux kernel's list.h |
| array.h | array.md | Generic auto-growing array — type-erased contiguous storage with optional lifecycle callbacks (retain/release/equal) |
| hex.h | hex.md | Hex (base16) encode/decode — binary to/from ASCII hex string (lower-case output, case-insensitive decode) |
| base64.h | base64.md | Base64 encode/decode (RFC 4648) — standard and URL-safe alphabets, with or without = padding |
| time.h | — | Time utilities: xMonoMs() (monotonic) and xWallMs() (wall-clock) in milliseconds |
| cmd.h | cmd.md | Async command executor over xEventLoop — spawn child processes with stdout/stderr capture, streaming, discard, and PTY modes |
| flag.h | flag.md | POSIX/GNU-style command-line flag parser — typed storage, auto-generated --help, choice validation, counter and positional support |

How to Choose

| I need to… | Use |

|---|---|

| React to I/O readiness on file descriptors | event.h — register fds and get edge-triggered callbacks |

| Schedule delayed or periodic work | timer.h — standalone timer, or use xEventLoopTimerAfter() for event-loop-integrated timers |

| Run CPU-bound work off the main thread | task.h — submit to a thread pool, optionally collect results |

| Post a callback to the event loop from another thread | event.h — xEventLoopPost() for cross-thread dispatch with no thread-pool overhead |

| Manage non-blocking TCP/UDP connections | socket.h — wraps socket + event loop + idle timeout |

| Allocate objects with automatic cleanup | memory.h — XMALLOC(T) + xRetain/xRelease |

| Pool many small fixed-size objects with minimal overhead | slab.h — xSlab (ST) / xSlabMt (MT) object pool with intrusive freelist |

| Report errors from library internals | log.h — thread-local callback, or stderr fallback |

| Capture a stack trace for debugging | backtrace.h — xBacktrace() fills a buffer |

| Handle error codes uniformly | error.h — xErrno enum + xstrerror() |

| Build a priority queue | heap.h — generic min-heap with index tracking |

| Store key-value pairs with O(1) or O(log n) access | map.h — generic map with hash, flat, and tree backends |

| Chain elements in an intrusive doubly-linked list | list.h — zero-allocation circular list with xContainerOf entry access |

| Store a growable list of fixed-size elements with automatic cleanup | array.h — xArray with optional retain/release callbacks for per-element resource management |

| Pass messages between threads lock-free | mpsc.h — intrusive MPSC queue |

| Perform atomic read-modify-write | atomic.h — macro wrappers over compiler builtins |

| Get current time in milliseconds | time.h — xMonoMs() for elapsed time, xWallMs() for wall-clock |

| Read/write through abstract I/O interfaces | io.h — xReader / xWriter + helpers like xReadFull, xReadAll |

| Submit a shell command asynchronously | cmd.h — xCommandExecutorSubmit() with capture, stream, or discard output modes |

| Parse command-line arguments | flag.h — xFlagAddString / Int / Bool / Choice / Counter / Positional + xFlagParse with auto-generated --help |

Quick Start

A minimal example that creates an event loop, schedules a one-shot timer, and runs until the timer fires:

```c
#include <stdio.h>
#include <xbase/event.h>

static void on_timer(void *arg) {
    printf("Timer fired!\n");
    xEventLoopStop((xEventLoop)arg);
}

int main(void) {
    // Create an event loop
    xEventLoop loop = xEventLoopCreate();
    if (!loop) return 1;

    // Schedule a timer to fire after 1 second
    xEventLoopTimerAfter(loop, on_timer, loop, 1000);

    // Run the event loop (blocks until xEventLoopStop is called)
    xEventLoopRun(loop);

    // Clean up
    xEventLoopDestroy(loop);
    return 0;
}
```

Compile with:

```bash
gcc -o example example.c -I/path/to/moo -lxbase -lpthread
```

Relationship with Other Modules

graph LR

XBASE["xbase"]

XBUF["xbuf"]

XHTTP["xhttp"]

XLOG["xlog"]

XHTTP -->|"event loop + timer"| XBASE

XHTTP -->|"I/O buffers"| XBUF

XLOG -->|"event loop + MPSC queue"| XBASE

XBUF -.->|"atomic.h only"| XBASE

XNET["xnet"]

XNET -->|"event loop + thread pool + atomic"| XBASE

XHTTP -->|"URL + DNS + TLS config"| XNET

style XBASE fill:#4a90d9,color:#fff

style XBUF fill:#50b86c,color:#fff

style XHTTP fill:#f5a623,color:#fff

style XLOG fill:#e74c3c,color:#fff

style XNET fill:#e74c3c,color:#fff

- xbuf — Buffer module. xIOBuffer uses xbase's atomic.h for lock-free block pool management. xhttp uses both xbase and xbuf together.

- xhttp — The async HTTP client is built on top of xbase's event loop (xEventLoop) and timer infrastructure, and uses xbuf for response buffering.

- xnet — The networking primitives module. The async DNS resolver uses xbase's event loop for thread-pool offload (xEventLoopSubmit) and atomic.h for the cancellation flag. Cross-thread notifications (e.g., ICE/TURN completions) can use xEventLoopPost() to avoid thread-pool overhead.

- xlog — The async logger uses xbase's event loop for timer-based flushing and the MPSC queue for lock-free log message passing from application threads to the logger thread.

event.h — Cross-Platform Event Loop

Introduction

event.h provides a cross-platform, edge-triggered event loop abstraction for I/O multiplexing. It unifies three OS-specific backends — kqueue (macOS/BSD), epoll (Linux), and poll (POSIX fallback) — behind a single API. The event loop is the central coordination point in xbase: it monitors file descriptors for readiness, dispatches timer callbacks, offloads CPU-bound work to thread pools, and watches for POSIX signals — all from a single thread.

Design Philosophy

- Edge-Triggered Everywhere — All three backends operate in edge-triggered mode. kqueue uses EV_CLEAR, epoll uses EPOLLET, and poll emulates edge-triggered behavior by clearing the event mask after each notification (requiring the caller to re-arm via xEventMod()). This design encourages callers to drain fds completely, reducing spurious wakeups.

- Backend Selection at Compile Time — The backend is chosen via preprocessor macros (MOO_HAS_KQUEUE, MOO_HAS_EPOLL), with poll as the universal fallback. This means zero runtime dispatch overhead.

- Integrated Timer Heap — Rather than requiring a separate timer facility, the event loop embeds a min-heap of timer entries. xEventWait() automatically adjusts its timeout to fire the earliest timer, providing sub-millisecond timer resolution without a dedicated timer thread.

- Thread-Pool Offload — xEventLoopSubmit() bridges the event loop and the task system: CPU-bound work runs on a worker thread, and the completion callback is dispatched on the event loop thread via a lock-free MPSC queue + cross-thread wake, ensuring single-threaded callback semantics. Offloaded work can be cancelled via xEventLoopWorkCancel() if it hasn't started yet.

- Direct Cross-Thread Posting — xEventLoopPost() allows any thread to queue a callback for execution on the event loop thread without involving a thread pool. This is the lightest cross-thread communication primitive — ideal for notifying the loop of external events (e.g., ICE/TURN callbacks, inter-module signals) with zero thread-pool overhead.

- Self-Pipe Trick for Signals — On epoll and poll backends, signal delivery uses the self-pipe trick (a sigaction handler writes to a pipe) rather than signalfd, avoiding the fragile requirement of blocking signals in every thread. On kqueue, EVFILT_SIGNAL is used natively.

Architecture

graph TD

subgraph "Event Loop (single thread)"

WAIT["xEventWait()"]

DISPATCH["Dispatch I/O callbacks"]

TIMERS["Fire expired timers"]

DONE["Drain done-queue"]

SWEEP["Sweep deleted sources"]

end

subgraph "Backend (compile-time)"

KQ["kqueue"]

EP["epoll"]

PO["poll"]

end

subgraph "Cross-Thread"

WAKE["Wake (EVFILT_USER / eventfd / pipe)"]

MPSC_Q["MPSC Done Queue"]

WORKER["Worker Thread Pool"]

POST["xEventLoopPost()"]

end

WAIT --> KQ

WAIT --> EP

WAIT --> PO

KQ --> DISPATCH

EP --> DISPATCH

PO --> DISPATCH

DISPATCH --> TIMERS

TIMERS --> DONE

DONE --> SWEEP

WORKER -->|"push result"| MPSC_Q

POST -->|"push callback"| MPSC_Q

MPSC_Q -->|"wake"| WAKE

WAKE -->|"drain"| DONE

style WAIT fill:#4a90d9,color:#fff

style DISPATCH fill:#4a90d9,color:#fff

style TIMERS fill:#f5a623,color:#fff

style DONE fill:#50b86c,color:#fff

Event Loop Lifecycle

sequenceDiagram

participant App

participant EL as xEventLoop

participant Backend as kqueue / epoll / poll

participant Timer as Timer Heap

App->>EL: xEventLoopCreate()

App->>EL: xEventAdd(fd, mask, callback)

App->>EL: xEventLoopTimerAfter(fn, 1000ms)

App->>EL: xEventLoopRun()

loop Main Loop

EL->>Timer: Check earliest deadline

Timer-->>EL: timeout = min(user_timeout, timer_deadline)

EL->>Backend: wait(timeout)

Backend-->>EL: ready events

EL->>App: callback(fd, mask)

EL->>Timer: Pop & fire expired timers

EL->>EL: Sweep deleted sources

end

App->>EL: xEventLoopStop()

App->>EL: xEventLoopDestroy()

Implementation Details

Backend Architecture

Each backend is implemented in a separate .c file that provides the full public API:

| File | Backend | Trigger Mode | Selection |
|---|---|---|---|
| event_kqueue.c | kqueue | EV_CLEAR (native edge) | #ifdef MOO_HAS_KQUEUE |
| event_epoll.c | epoll | EPOLLET (native edge) | #ifdef MOO_HAS_EPOLL |
| event_poll.c | poll(2) | Emulated edge (mask cleared after dispatch) | Fallback |
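The "emulated edge" row can be sketched with plain poll(2). This is an assumption-laden illustration rather than the event_poll.c code: `demo_poll_edge` and `demo_rearm` are hypothetical helpers, with `demo_rearm` standing in for the re-arm step that the document attributes to xEventMod().

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <poll.h>
#include <unistd.h>

/* Wait for readiness, then clear the interest mask of every fd that fired.
 * A cleared mask means the fd is not reported again until it is re-armed,
 * which approximates one notification per readiness edge. */
static int demo_poll_edge(struct pollfd *fds, nfds_t n, int timeout_ms) {
    int rc = poll(fds, n, timeout_ms);
    for (nfds_t i = 0; rc > 0 && i < n; i++) {
        if (fds[i].revents != 0)
            fds[i].events = 0;      /* disarm after notifying once */
    }
    return rc;
}

/* Re-arm an fd once the caller has drained it (or wants another notification). */
static void demo_rearm(struct pollfd *p, short mask) {
    p->events = mask;
}
```

Note that poll(2) still reports POLLERR and POLLHUP even with an empty interest mask, so a production version needs to handle those separately.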

All backends share a common base structure (struct xEventLoop_) defined in event_private.h, which contains:

- A dynamic source array with deferred deletion (sweep after dispatch)

- A cross-thread wake mechanism (EVFILT_USER on kqueue, eventfd on epoll, pipe on poll) with atomic coalescing

- A min-heap for builtin timers (protected by timer_mu mutex)

- A lock-free MPSC done-queue for offload completion and posted callbacks

- Signal watch slots (up to MOO_SIGNAL_MAX = 64)

Deferred Source Deletion

When xEventDel() is called during a callback dispatch, the source is marked deleted = 1 rather than freed immediately. After the dispatch batch completes, source_array_sweep() frees all deleted sources. This prevents use-after-free when multiple events reference the same source in a single xEventWait() call.
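The mark-then-sweep idea can be sketched in isolation. This is a minimal illustration, not the library's code: the `Source` and `SourceArray` types and the `source_add`, `source_del` and `source_sweep` helpers are hypothetical names chosen for the example.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical stand-in for an event source; not the real xEventSource. */
typedef struct {
    int fd;
    int deleted; /* set by source_del(), honoured by source_sweep() */
} Source;

typedef struct {
    Source **items;
    size_t len;
    size_t cap;
} SourceArray;

/* Append a new live source; returns NULL on allocation failure. */
static Source *source_add(SourceArray *a, int fd) {
    assert(a != NULL);
    if (a->len == a->cap) {
        size_t ncap = a->cap ? a->cap * 2 : 4;
        Source **grown = realloc(a->items, ncap * sizeof *grown);
        if (!grown) return NULL;
        a->items = grown;
        a->cap = ncap;
    }
    Source *s = calloc(1, sizeof *s);
    if (!s) return NULL;
    s->fd = fd;
    a->items[a->len++] = s;
    return s;
}

/* Safe to call from inside a callback: only marks, never frees. */
static void source_del(Source *s) {
    s->deleted = 1;
}

/* Run once the dispatch batch is finished: free marked sources and
 * compact the array. Returns the number of sources freed. */
static size_t source_sweep(SourceArray *a) {
    size_t kept = 0, freed = 0;
    for (size_t i = 0; i < a->len; i++) {
        if (a->items[i]->deleted) {
            free(a->items[i]);
            freed++;
        } else {
            a->items[kept++] = a->items[i];
        }
    }
    a->len = kept;
    return freed;
}
```

Because `source_del()` never frees, a later event in the same batch that still points at the source can check `deleted` and skip it instead of touching freed memory.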

Cross-Thread Wake

Each backend uses the lightest available mechanism for cross-thread wakeup:

| Backend | Mechanism | Fds Used |

|---|---|---|

| kqueue | EVFILT_USER with NOTE_TRIGGER | 0 (kernel event, no fd) |

| epoll | eventfd (EFD_NONBLOCK \| EFD_CLOEXEC) | 1 (wake_rfd) |

| poll | Non-blocking pipe (wake_rfd / wake_wfd) | 2 (POSIX fallback) |

xEventWake() triggers the backend-specific notification; the event loop drains it and processes the done-queue. Multiple wakes before the next xEventWait() are coalesced via an atomic wake_pending flag: only the first caller after the loop clears the flag performs the actual syscall, and subsequent callers skip it entirely. This reduces wake overhead from O(N) syscalls to O(1) in batch completion scenarios.

Timer Integration

Builtin timers are stored in a min-heap inside the event loop. Before each xEventWait() call, the effective timeout is clamped to the earliest timer deadline. After I/O dispatch, expired timers are popped and fired. Timer operations (xEventLoopTimerAfter, xEventLoopTimerAt, xEventLoopTimerCancel) are thread-safe, protected by timer_mu.
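The clamping step is plain arithmetic and can be sketched as below. The function name `clamp_timeout` and the convention that -1 means "block indefinitely" are assumptions made for this example, not the library's documented behaviour.

```c
#include <assert.h>
#include <limits.h>
#include <stdint.h>

/* Returns the timeout in milliseconds to hand to the backend wait.
 * user_timeout_ms == -1 means "block indefinitely" (assumed convention);
 * has_timer == 0 means the timer heap is empty. */
static int clamp_timeout(int user_timeout_ms, int has_timer,
                         uint64_t earliest_deadline_ms, uint64_t now_ms) {
    assert(user_timeout_ms >= -1);
    if (!has_timer) return user_timeout_ms;

    /* A deadline in the past yields 0, so the wait returns immediately. */
    uint64_t until = earliest_deadline_ms > now_ms ? earliest_deadline_ms - now_ms : 0;
    int timer_ms = until > (uint64_t)INT_MAX ? INT_MAX : (int)until;

    if (user_timeout_ms < 0) return timer_ms;
    return timer_ms < user_timeout_ms ? timer_ms : user_timeout_ms;
}
```

For example, with a timer due in 500 ms and a caller timeout of 5000 ms, the backend is asked to wait 500 ms so the timer can fire on time.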

Signal Handling

| Backend | Mechanism |

|---|---|

| kqueue | EVFILT_SIGNAL with EV_CLEAR — native kernel support |

| epoll | Self-pipe trick: sigaction handler writes to a per-signal pipe |

| poll | Self-pipe trick: same as epoll |

The self-pipe approach avoids signalfd's requirement to block signals in all threads, which is fragile in the presence of third-party libraries and test frameworks.
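For readers unfamiliar with the self-pipe trick, here is a minimal POSIX sketch. It uses a single pipe for one signal, whereas the text above describes one pipe per signal; `selfpipe_watch` and `selfpipe_drain` are hypothetical names, not library functions.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <errno.h>
#include <fcntl.h>
#include <signal.h>
#include <string.h>
#include <unistd.h>

static int sig_pipe[2] = {-1, -1}; /* [0] = read end, [1] = write end */

/* Handler: only async-signal-safe work, i.e. a single write(). */
static void on_signal(int signo) {
    int saved_errno = errno;
    unsigned char byte = (unsigned char)signo;
    ssize_t rc = write(sig_pipe[1], &byte, 1);
    (void)rc; /* a full pipe just means a wake is already pending */
    errno = saved_errno;
}

/* Install the handler; returns the fd the loop should watch, or -1 on error. */
static int selfpipe_watch(int signo) {
    assert(signo > 0 && signo < 256);
    if (pipe(sig_pipe) != 0) return -1;
    for (int i = 0; i < 2; i++) {
        int flags = fcntl(sig_pipe[i], F_GETFL);
        if (flags < 0 || fcntl(sig_pipe[i], F_SETFL, flags | O_NONBLOCK) < 0) return -1;
    }
    struct sigaction sa;
    memset(&sa, 0, sizeof sa);
    sa.sa_handler = on_signal;
    sigemptyset(&sa.sa_mask);
    sa.sa_flags = SA_RESTART;
    if (sigaction(signo, &sa, NULL) != 0) return -1;
    return sig_pipe[0];
}

/* Loop side: drain the pipe; returns the last signal number seen, or 0 if none. */
static int selfpipe_drain(void) {
    unsigned char byte;
    int last = 0;
    while (read(sig_pipe[0], &byte, 1) == 1) last = byte;
    return last;
}
```

The read end behaves like any other fd, so it can be registered for readability and the user's signal callback runs in normal loop context rather than inside the handler.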

API Reference

Types

| Type | Description |
|---|---|
| xEventMask | Bitmask enum: xEvent_Read (1), xEvent_Write (2), xEvent_Timeout (4) |
| xEventFunc | void (*)(int fd, xEventMask mask, void *arg) — I/O callback |
| xEventTimerFunc | void (*)(void *arg) — Timer callback |
| xEventSignalFunc | void (*)(int signo, void *arg) — Signal callback |
| xEventDoneFunc | void (*)(void *arg, void *result) — Offload completion callback |
| xEventPostFunc | void (*)(void *arg) — Posted callback (via xEventLoopPost) |
| xEventLoop | Opaque handle to an event loop |
| xEventSource | Opaque handle to a registered event source |
| xEventTimer | Opaque handle to a builtin timer |
| xEventWork | Opaque handle to a submitted offload work item |

Functions

Lifecycle

| Function | Signature | Thread Safety |
|---|---|---|
| xEventLoopCreate | xEventLoop xEventLoopCreate(void) | Not thread-safe |
| xEventLoopCreateWithGroup | xEventLoop xEventLoopCreateWithGroup(xTaskGroup group) | Not thread-safe |
| xEventLoopDestroy | void xEventLoopDestroy(xEventLoop loop) | Not thread-safe |
| xEventLoopRun | void xEventLoopRun(xEventLoop loop) | Not thread-safe (call from one thread) |
| xEventLoopStop | void xEventLoopStop(xEventLoop loop) | Thread-safe |
| xEventLoopWait | xErrno xEventLoopWait(xEventLoop loop, int timeout_ms) | Not thread-safe (call from one thread) |

I/O Sources

| Function | Signature | Thread Safety |
|---|---|---|
| xEventAdd | xEventSource xEventAdd(xEventLoop loop, int fd, xEventMask mask, xEventFunc fn, void *arg) | Not thread-safe |
| xEventMod | xErrno xEventMod(xEventLoop loop, xEventSource src, xEventMask mask) | Not thread-safe |
| xEventDel | xErrno xEventDel(xEventLoop loop, xEventSource src) | Not thread-safe |
| xEventWait | int xEventWait(xEventLoop loop, int timeout_ms) | Not thread-safe |

Timers

| Function | Signature | Thread Safety |
|---|---|---|
| xEventLoopTimerAfter | xEventTimer xEventLoopTimerAfter(xEventLoop loop, xEventTimerFunc fn, void *arg, uint64_t delay_ms) | Thread-safe |
| xEventLoopTimerAt | xEventTimer xEventLoopTimerAt(xEventLoop loop, xEventTimerFunc fn, void *arg, uint64_t abs_ms) | Thread-safe |
| xEventLoopTimerCancel | xErrno xEventLoopTimerCancel(xEventLoop loop, xEventTimer timer) | Thread-safe |

Cross-Thread

| Function | Signature | Thread Safety |
|---|---|---|
| xEventWake | xErrno xEventWake(xEventLoop loop) | Thread-safe (signal-handler-safe) |
| xEventLoopPost | xErrno xEventLoopPost(xEventLoop loop, xEventPostFunc fn, void *arg) | Thread-safe |
| xEventLoopSubmit | xErrno xEventLoopSubmit(xEventLoop loop, xTaskGroup group, xTaskFunc work_fn, xEventDoneFunc done_fn, void *arg, xEventWork *out) | Thread-safe |
| xEventLoopWorkCancel | xErrno xEventLoopWorkCancel(xEventLoop loop, xEventWork work) | Thread-safe |

Signal

| Function | Signature | Thread Safety |
|---|---|---|
| xEventLoopSignalWatch | xErrno xEventLoopSignalWatch(xEventLoop loop, int signo, xEventSignalFunc fn, void *arg) | Not thread-safe |

Deprecated

| Function | Signature | Replacement |
|---|---|---|
| xEventLoopNowMs | uint64_t xEventLoopNowMs(void) | xMonoMs() from <xbase/time.h> |

Usage Examples

Basic Event Loop with Timer

#include <stdio.h>

#include <xbase/event.h>

static void on_timer(void *arg) {

printf("Timer fired!\n");

xEventLoopStop((xEventLoop)arg);

}

int main(void) {

xEventLoop loop = xEventLoopCreate();

if (!loop) return 1;

// Fire after 500ms

xEventLoopTimerAfter(loop, on_timer, loop, 500);

xEventLoopRun(loop);

xEventLoopDestroy(loop);

return 0;

}

Monitoring a File Descriptor

#include <stdio.h>
#include <unistd.h>
#include <xbase/event.h>

static void on_readable(int fd, xEventMask mask, void *arg) {
    char buf[1024];
    ssize_t n;

    // Edge-triggered: must drain completely.
    // Assumes fd is non-blocking; otherwise the final read() would block.
    while ((n = read(fd, buf, sizeof(buf))) > 0) {
        fwrite(buf, 1, (size_t)n, stdout);
    }
    (void)mask;
    (void)arg;
}

// xEventLoopStop() takes an xEventLoop, not a void *, so wrap it in a
// function with the xEventTimerFunc signature instead of casting it.
static void on_deadline(void *arg) {
    xEventLoopStop((xEventLoop)arg);
}

int main(void) {
    xEventLoop loop = xEventLoopCreate();
    if (!loop) return 1;

    // Monitor stdin for readability
    xEventAdd(loop, STDIN_FILENO, xEvent_Read, on_readable, NULL);

    // Run for up to 10 seconds, then stop
    xEventLoopTimerAfter(loop, on_deadline, loop, 10000);

    xEventLoopRun(loop);
    xEventLoopDestroy(loop);
    return 0;
}

Bounded Wait with Timeout

#include <stdio.h>

#include <xbase/event.h>

static void on_done(void *arg) {

printf("Work complete!\n");

xEventLoopStop((xEventLoop)arg);

}

int main(void) {

xEventLoop loop = xEventLoopCreate();

xEventLoopTimerAfter(loop, on_done, loop, 500);

// Wait up to 5 seconds — returns xErrno_Ok if stopped,

// or xErrno_Timeout if the deadline expires.

xErrno rc = xEventLoopWait(loop, 5000);

if (rc == xErrno_Timeout) {

printf("Timed out!\n");

}

xEventLoopDestroy(loop);

return 0;

}

Posting a Callback to the Loop Thread

#include <stdio.h>

#include <pthread.h>

#include <xbase/event.h>

static void on_notify(void *arg) {

// Runs on the event loop thread — safe to access loop state

printf("Notified from another thread!\n");

xEventLoopStop((xEventLoop)arg);

}

static void *background_thread(void *arg) {

xEventLoop loop = (xEventLoop)arg;

// Do some work...

xEventLoopPost(loop, on_notify, loop);

return NULL;

}

int main(void) {

xEventLoop loop = xEventLoopCreate();

pthread_t th;

pthread_create(&th, NULL, background_thread, loop);

xEventLoopRun(loop);

pthread_join(th, NULL);

xEventLoopDestroy(loop);

return 0;

}

Offloading Work to a Thread Pool

#include <stdio.h>
#include <xbase/event.h>

static void *heavy_work(void *arg) {
    // Runs on a worker thread
    int *val = (int *)arg;
    *val *= 2;
    return val;
}

static void on_done(void *arg, void *result) {
    // Runs on the event loop thread
    int *val = (int *)result;
    printf("Result: %d\n", *val);
    (void)arg;
}

// Wrapper with the xEventTimerFunc signature; avoids calling
// xEventLoopStop() through a cast function pointer.
static void on_deadline(void *arg) {
    xEventLoopStop((xEventLoop)arg);
}

int main(void) {
    xEventLoop loop = xEventLoopCreate();
    if (!loop) return 1;

    int value = 21;
    xEventLoopSubmit(loop, NULL, heavy_work, on_done, &value, NULL);

    // Run briefly to process the completion
    xEventLoopTimerAfter(loop, on_deadline, loop, 1000);

    xEventLoopRun(loop);
    xEventLoopDestroy(loop);
    return 0;
}

Use Cases

- Network Servers — Register listening sockets and accepted connections with the event loop. Use edge-triggered callbacks to read/write data without blocking. Combine with xSocket for idle-timeout support.

- Timer-Driven State Machines — Use xEventLoopTimerAfter() to schedule state transitions, retries, or heartbeat checks. The timer is integrated into the event loop, so no separate timer thread is needed.

- Hybrid I/O + CPU Workloads — Use xEventLoopSubmit() to offload CPU-intensive parsing or compression to a thread pool, then process results on the event loop thread where I/O state is safely accessible. Use xEventLoopWorkCancel() to cancel pending work when the associated resource is being released.

- Cross-Thread Notifications — Use xEventLoopPost() to notify the event loop from external callbacks (e.g., ICE/TURN completions, OS notifications) without the overhead of a thread pool round-trip. The callback runs on the loop thread, so no additional synchronisation is needed.

Best Practices

- Always drain fds in edge-triggered mode. Read/write until EAGAIN in every callback. Missing data means you won't be notified again until new data arrives.

- Never block in callbacks. The event loop is single-threaded; a blocking call stalls all I/O and timer processing. Offload heavy work via xEventLoopSubmit().

- Prefer xEventLoopPost() over xEventLoopSubmit() when no worker thread is needed. If you just need to run a callback on the loop thread from another thread, xEventLoopPost() avoids the thread-pool overhead entirely.

- Use xEventLoopRun() for the main loop. It handles timer dispatch and stop-flag checking automatically. Only use xEventWait() directly if you need custom loop logic. For tests or scenarios where you need a bounded wait, use xEventLoopWait(loop, timeout_ms) — it returns xErrno_Ok when stopped, or xErrno_Timeout if the deadline expires.

- Cancel offloaded work when releasing resources. If you submit work via xEventLoopSubmit() and the associated resource (passed as arg) is about to be freed, use xEventLoopWorkCancel() to prevent use-after-free. If cancel succeeds (xErrno_Ok), the arg is safe to free immediately. If it fails (xErrno_InvalidState), the work is already running — let done_fn handle cleanup.

- Cancel timers you no longer need. Uncancelled timers hold memory until they fire. Use xEventLoopTimerCancel() to free them early.

- Be aware of the poll backend's edge emulation. On systems without kqueue or epoll, the poll backend clears the event mask after dispatch. You must call xEventMod() to re-arm.
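The first practice, draining until EAGAIN, is sketched below with plain POSIX calls. The helpers `set_nonblocking` and `drain_fd` are hypothetical names for this illustration; a real callback would process the bytes rather than just count them.

```c
#include <assert.h>
#include <errno.h>
#include <fcntl.h>
#include <sys/types.h>
#include <unistd.h>

/* Put fd into non-blocking mode; returns 0 on success, -1 on error. */
static int set_nonblocking(int fd) {
    int flags = fcntl(fd, F_GETFL);
    if (flags < 0) return -1;
    return fcntl(fd, F_SETFL, flags | O_NONBLOCK) < 0 ? -1 : 0;
}

/* Read from a non-blocking fd until the kernel reports EAGAIN.
 * Returns total bytes consumed, or -1 on a real error.
 * *eof is set to 1 when the peer closed the stream. */
static long drain_fd(int fd, int *eof) {
    char buf[4096];
    long total = 0;
    assert(eof != NULL);
    *eof = 0;
    for (;;) {
        ssize_t n = read(fd, buf, sizeof buf);
        if (n > 0) { total += n; continue; }   /* more may be waiting */
        if (n == 0) { *eof = 1; return total; } /* end of file */
        if (errno == EINTR) continue;           /* interrupted, retry */
        if (errno == EAGAIN || errno == EWOULDBLOCK) return total; /* fully drained */
        return -1;
    }
}
```

Distinguishing end-of-file from EAGAIN matters in practice: on EOF the source should be removed from the loop, while EAGAIN simply means "wait for the next edge".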

Comparison with Other Libraries

| Feature | xbase event.h | libevent | libev | libuv |

|---|---|---|---|---|

| Trigger Mode | Edge-triggered only | Level (default), edge optional | Level + edge | Level-triggered |

| Backends | kqueue, epoll, poll | kqueue, epoll, poll, select, devpoll, IOCP | kqueue, epoll, poll, select, port | kqueue, epoll, poll, IOCP |

| Timer Integration | Built-in min-heap | Separate timer API | Built-in | Built-in |

| Thread Pool | Built-in (xEventLoopSubmit) | None (external) | None (external) | Built-in (uv_queue_work) |

| Signal Handling | Self-pipe / EVFILT_SIGNAL | evsignal | ev_signal | uv_signal |

| API Style | Opaque handles, C99 | Struct-based, C89 | Struct-based, C89 | Handle-based, C99 |

| Binary Size | ~15 KB | ~200 KB | ~50 KB | ~500 KB |

| Dependencies | None | None | None | None |

| Windows Support | Not yet | Yes (IOCP) | Yes (select) | Yes (IOCP) |

| Design Goal | Minimal building block | Full-featured framework | Minimal + performant | Cross-platform framework |

Key Differentiator: xbase's event loop is intentionally minimal — it provides the essential primitives (I/O, timers, signals, thread-pool offload) without buffered I/O, DNS resolution, or HTTP parsing. This makes it ideal as a foundation layer for higher-level libraries (like xhttp) rather than a standalone application framework.

Benchmark

Environment: Apple M3 Pro, 36 GB RAM, macOS 26.4, Release build (-O2), kqueue backend.

Source: xbase/event_bench.cpp

Full report: docs/bench/event_loop.md

Core Operations

| Benchmark | Time (ns) | CPU (ns) | Iterations |
|---|---|---|---|
| BM_EventLoop_CreateDestroy | 700 | 700 | 974,157 |
| BM_EventLoop_WakeLatency | 413 | 413 | 1,717,088 |
| BM_EventLoop_PipeAddDel | 1,144 | 1,144 | 612,118 |

- Create/Destroy takes ~700ns — reduced from ~2.8µs after eliminating the wake pipe (no more pipe() + two extra fds).

- Wake latency is ~413ns per wake+wait cycle via EVFILT_USER, down from ~879ns with the old pipe mechanism — a 2.1× improvement.

libuv Baseline Comparison

| Dimension | moo | libuv | Ratio |

|---|---|---|---|

| Wake Latency | 413 ns | 417 ns | Tied (moo 1.01× faster) |

| Timer (single) | 461 ns | 1,517 ns | moo 3.3× faster |

| Timer (×1000) | 43,545 ns | 68,659 ns | moo 1.6× faster |

| Offload (single) | 3,785 ns | 3,449 ns | libuv 1.1× faster (tied) |

| Offload (×1000) | 456,426 ns | 218,513 ns | libuv 2.1× faster |

Key Observations:

- Wake latency — Now effectively tied with libuv (413ns vs 417ns) after switching to EVFILT_USER (kqueue) / eventfd (epoll) + atomic wake coalescing. Previously 2.1× slower.

- Timer — moo now wins across all batch sizes thanks to batch-pop with single lock acquisition and timer struct freelist pooling. Previously libuv was 4–5× faster at batch sizes.

- Offload round-trip — libuv remains ~2× faster at scale. The gap has narrowed at small batch sizes thanks to wake coalescing and work item pooling.

timer.h — Monotonic Timer

Introduction

timer.h provides a standalone monotonic timer that schedules callbacks to fire after a delay or at an absolute time. It supports two fire modes — Push mode (dispatch to a thread pool) and Poll mode (enqueue to a lock-free MPSC queue for caller-driven execution) — making it suitable for both multi-threaded and single-threaded architectures.

Note: For timers integrated directly into an event loop, see xEventLoopTimerAfter() / xEventLoopTimerAt() in event.h. The standalone timer.h is useful when you need timers without an event loop, or when you want explicit control over which thread executes the callbacks.

Design Philosophy

- Dual Fire Modes — Push mode hands expired callbacks to a thread pool for concurrent execution; Poll mode queues them for the caller to drain synchronously. This lets latency-sensitive code (e.g., an event loop) avoid thread-switch overhead by polling, while background services can use push mode for simplicity.

- Dedicated Timer Thread — Each xTimer instance spawns one background thread that sleeps on a condition variable, waking only when the earliest deadline arrives or a new entry is submitted. This avoids busy-waiting and keeps CPU usage near zero when idle.

- Min-Heap for O(log n) Scheduling — Timer entries are stored in a min-heap ordered by deadline. Insert, cancel, and fire-next are all O(log n). The heap is provided by heap.h.

- Lock-Free Poll Queue — In poll mode, expired entries are pushed onto an intrusive MPSC queue (mpsc.h) without holding the mutex, minimizing contention between the timer thread and the polling thread.

Architecture

sequenceDiagram

participant App

participant Timer as xTimer

participant Thread as Timer Thread

participant Heap as Min-Heap

participant Queue as MPSC Queue

App->>Timer: xTimerCreate(group)

Timer->>Thread: spawn

App->>Timer: xTimerSubmitAfter(fn, 1000ms)

Timer->>Heap: push(entry)

Timer->>Thread: signal(cond)

Thread->>Heap: peek → deadline

Note over Thread: sleep until deadline

Thread->>Heap: pop(entry)

alt Push Mode

Thread->>App: xTaskSubmit(fn)

else Poll Mode

Thread->>Queue: xMpscPush(entry)

App->>Queue: xTimerPoll()

Queue-->>App: callback(arg)

end

Implementation Details

Internal Structure

struct xTimerTask_ {

xMpsc node; // Intrusive MPSC node (poll mode)

uint64_t deadline; // Absolute expiry time (CLOCK_MONOTONIC, ms)

xTimerFunc fn; // User callback

void *arg; // User argument

size_t heap_idx; // Position in min-heap (TIMER_INVALID_IDX when not in heap)

int cancelled; // Set to 1 under mutex before removal

};

struct xTimer_ {

xHeap heap; // Min-heap ordered by deadline

xTaskGroup group; // Non-NULL → push mode; NULL → poll mode

xMpsc *mq_head; // Poll-mode MPSC queue head

xMpsc *mq_tail; // Poll-mode MPSC queue tail

pthread_t thread; // Background timer thread

pthread_mutex_t mu; // Protects heap and stopped flag

pthread_cond_t cond; // Wakes timer thread on new entry or stop

int stopped; // Shutdown flag

};

Timer Thread Loop

The background thread follows this algorithm:

- Wait — If the heap is empty, block on pthread_cond_wait().

- Check top — Peek at the minimum-deadline entry.

- Fire or sleep — If deadline ≤ now, pop and fire. Otherwise, pthread_cond_timedwait() until the deadline or a new signal.

- Repeat until stopped is set.

When a new entry is submitted, pthread_cond_signal() wakes the thread so it can re-evaluate whether the new entry has an earlier deadline.

Push vs. Poll Mode

graph LR

subgraph "Push Mode (group != NULL)"

HEAP_P["Min-Heap"] -->|"pop expired"| FIRE_P["fire()"]

FIRE_P -->|"xTaskSubmit"| POOL["Thread Pool"]

POOL -->|"execute"| CB_P["callback(arg)"]

end

subgraph "Poll Mode (group == NULL)"

HEAP_Q["Min-Heap"] -->|"pop expired"| FIRE_Q["fire()"]

FIRE_Q -->|"xMpscPush"| MPSC["MPSC Queue"]

MPSC -->|"xTimerPoll()"| CB_Q["callback(arg)"]

end

style POOL fill:#4a90d9,color:#fff

style MPSC fill:#f5a623,color:#fff

Cancellation

xTimerCancel() acquires the mutex, checks if the entry is still in the heap (not already fired or cancelled), removes it via xHeapRemove(), marks it cancelled, and frees the memory. If the entry has already fired, xErrno_Cancelled is returned.
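Removing an arbitrary entry in O(log n) depends on each entry remembering its own heap position. The sketch below shows that technique on a fixed-capacity heap; it is not the heap.h implementation, and `Entry`, `Heap`, `heap_push` and `heap_remove` are hypothetical names. Locking is omitted.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define HEAP_CAP 64
#define INVALID_IDX ((size_t)-1)

typedef struct {
    uint64_t deadline;
    size_t heap_idx; /* position in the heap, INVALID_IDX when not queued */
} Entry;

typedef struct {
    Entry *slots[HEAP_CAP];
    size_t len;
} Heap;

/* Every move goes through here so heap_idx always matches the slot. */
static void heap_set(Heap *h, size_t i, Entry *e) {
    h->slots[i] = e;
    e->heap_idx = i;
}

static void sift_up(Heap *h, size_t i) {
    while (i > 0) {
        size_t p = (i - 1) / 2;
        if (h->slots[p]->deadline <= h->slots[i]->deadline) break;
        Entry *tmp = h->slots[p];
        heap_set(h, p, h->slots[i]);
        heap_set(h, i, tmp);
        i = p;
    }
}

static void sift_down(Heap *h, size_t i) {
    for (;;) {
        size_t l = 2 * i + 1, r = l + 1, m = i;
        if (l < h->len && h->slots[l]->deadline < h->slots[m]->deadline) m = l;
        if (r < h->len && h->slots[r]->deadline < h->slots[m]->deadline) m = r;
        if (m == i) break;
        Entry *tmp = h->slots[m];
        heap_set(h, m, h->slots[i]);
        heap_set(h, i, tmp);
        i = m;
    }
}

/* Returns 0 on success, -1 if the heap is full. */
static int heap_push(Heap *h, Entry *e) {
    if (h->len == HEAP_CAP) return -1;
    size_t i = h->len++;
    heap_set(h, i, e);
    sift_up(h, i);
    return 0;
}

/* Returns 0 on success, -1 if the entry is not in the heap
 * (for example because it already fired or was cancelled). */
static int heap_remove(Heap *h, Entry *e) {
    size_t i = e->heap_idx;
    if (i == INVALID_IDX) return -1;
    assert(i < h->len && h->slots[i] == e);
    e->heap_idx = INVALID_IDX;
    h->len--;
    if (i != h->len) {
        /* Move the last entry into the hole, then restore heap order. */
        heap_set(h, i, h->slots[h->len]);
        sift_up(h, i);
        sift_down(h, i);
    }
    return 0;
}
```

The sentinel index doubles as the "already fired or cancelled" check, which is what lets a second cancel on the same entry fail cleanly instead of corrupting the heap.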

Memory Ownership

- Push mode: The timer thread transfers ownership of the xTimerTask_ to the worker thread via xTaskSubmit(). The worker frees it after executing the callback.

- Poll mode: The timer thread pushes the entry to the MPSC queue. xTimerPoll() pops and frees each entry after executing its callback.

- Cancellation: The caller frees the entry immediately.

- Destroy: Remaining heap entries and poll-queue entries are freed without firing.

API Reference

Types

| Type | Description |
|---|---|
| xTimerFunc | void (*)(void *arg) — Timer callback signature |
| xTimer | Opaque handle to a timer instance |
| xTimerTask | Opaque handle to a submitted timer entry |

Functions

| Function | Signature | Description | Thread Safety |
|---|---|---|---|
| xTimerCreate | xTimer xTimerCreate(xTaskGroup g) | Create a timer. g != NULL → push mode, g == NULL → poll mode. | Not thread-safe |
| xTimerDestroy | void xTimerDestroy(xTimer t) | Stop the timer thread and free all resources. Pending entries are discarded. | Not thread-safe |
| xTimerSubmitAfter | xTimerTask xTimerSubmitAfter(xTimer t, xTimerFunc fn, void *arg, uint64_t delay_ms) | Schedule a callback after a relative delay. | Thread-safe |
| xTimerSubmitAt | xTimerTask xTimerSubmitAt(xTimer t, xTimerFunc fn, void *arg, uint64_t abs_ms) | Schedule a callback at an absolute monotonic time. | Thread-safe |
| xTimerCancel | xErrno xTimerCancel(xTimer t, xTimerTask task) | Cancel a pending entry. Returns xErrno_Ok if cancelled, xErrno_Cancelled if already fired. | Thread-safe |
| xTimerPoll | int xTimerPoll(xTimer t) | Execute all due callbacks (poll mode only). Returns count. No-op in push mode. | Not thread-safe |
| xTimerNowMs | uint64_t xTimerNowMs(void) | Deprecated. Use xMonoMs() from <xbase/time.h>. | Thread-safe |

Usage Examples

Push Mode (Thread Pool Dispatch)

#include <stdio.h>

#include <xbase/timer.h>

#include <xbase/task.h>

#include <unistd.h>

static void on_timeout(void *arg) {

printf("Timer fired on worker thread! arg=%p\n", arg);

}

int main(void) {

xTaskGroup group = xTaskGroupCreate(NULL);

xTimer timer = xTimerCreate(group);

// Fire after 500ms on a worker thread

xTimerSubmitAfter(timer, on_timeout, NULL, 500);

sleep(1); // Wait for timer to fire

xTimerDestroy(timer);

xTaskGroupDestroy(group);

return 0;

}

Poll Mode (Event Loop Integration)

#include <stdint.h>
#include <stdio.h>
#include <unistd.h> // usleep()
#include <xbase/timer.h>
#include <xbase/time.h>

static void on_timeout(void *arg) {
    int *count = (int *)arg;
    printf("Timer #%d fired on caller thread\n", ++(*count));
}

int main(void) {
    xTimer timer = xTimerCreate(NULL); // Poll mode
    int count = 0;

    // Schedule 3 timers
    xTimerSubmitAfter(timer, on_timeout, &count, 100);
    xTimerSubmitAfter(timer, on_timeout, &count, 200);
    xTimerSubmitAfter(timer, on_timeout, &count, 300);

    // Poll loop: callbacks run here, on the calling thread
    uint64_t start = xMonoMs();
    while (xMonoMs() - start < 500) {
        int n = xTimerPoll(timer);
        if (n > 0) printf(" Polled %d timer(s)\n", n);
        usleep(10000); // 10ms
    }

    xTimerDestroy(timer);
    return 0;
}

Use Cases

- Event Loop Timer Backend — The event loop's builtin timers (xEventLoopTimerAfter) use the same min-heap approach internally. Use standalone xTimer when you need timers independent of an event loop.

- Retry / Backoff Logic — Schedule retries with exponential backoff using xTimerSubmitAfter(). Cancel pending retries with xTimerCancel() when a response arrives.

- Periodic Health Checks — In poll mode, integrate xTimerPoll() into your main loop to execute periodic health checks without spawning additional threads.
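For the retry use case, the delay passed to the timer is usually computed by a small helper. The sketch below shows one way to do that; `backoff_delay_ms` and its parameters are hypothetical, and jitter is left out for brevity.

```c
#include <assert.h>
#include <stdint.h>

/* Delay before retry number `attempt` (0-based):
 * base_ms * 2^attempt, capped at max_ms. */
static uint64_t backoff_delay_ms(unsigned attempt, uint64_t base_ms, uint64_t max_ms) {
    assert(base_ms > 0 && base_ms <= max_ms);
    uint64_t delay = base_ms;
    while (attempt-- > 0) {
        if (delay > max_ms / 2) return max_ms; /* doubling would exceed the cap */
        delay *= 2;
    }
    return delay;
}
```

The result would be used as the delay_ms argument of xTimerSubmitAfter(); checking against max_ms / 2 before doubling also keeps the multiplication from overflowing.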

Best Practices

- Choose the right mode. Use push mode when callbacks are independent and can run concurrently. Use poll mode when callbacks must run on a specific thread (e.g., the event loop thread) or when you want to avoid thread-switch latency.

- Don't use the handle after fire or cancel. Once a timer entry fires or is cancelled, the memory is freed. Accessing the handle is undefined behavior.

- Destroy before the task group. If using push mode, destroy the timer before destroying the task group to ensure all in-flight callbacks complete.

- Prefer xEventLoopTimerAfter() when using an event loop. It avoids the overhead of a separate timer thread and integrates seamlessly with I/O dispatch.

Comparison with Other Libraries

| Feature | xbase timer.h | timerfd (Linux) | POSIX timer (timer_create) | libuv uv_timer |

|---|---|---|---|---|

| Platform | macOS + Linux | Linux only | POSIX (varies) | Cross-platform |

| Fire Mode | Push (thread pool) or Poll (MPSC) | fd-based (integrates with epoll) | Signal or thread | Event loop callback |

| Resolution | Millisecond (CLOCK_MONOTONIC) | Nanosecond | Nanosecond | Millisecond |

| Data Structure | Min-heap (O(log n)) | Kernel-managed | Kernel-managed | Min-heap |

| Thread Safety | Submit/Cancel are thread-safe | fd operations are thread-safe | Varies | Not thread-safe |

| Cancellation | O(log n) via heap index | timerfd_settime(0) | timer_delete() | uv_timer_stop() |

| Overhead | 1 background thread per xTimer | 1 fd per timer | 1 kernel timer per instance | Shared with event loop |

| Dependencies | heap.h, mpsc.h, task.h | Linux kernel | POSIX RT library | libuv |

Key Differentiator: xbase's timer provides a unique dual-mode design (push/poll) that lets you choose between concurrent execution and single-threaded polling without changing your callback code. The poll mode's lock-free MPSC queue makes it ideal for integration with custom event loops.

Benchmark

Environment: Apple Mac15,7 (12 cores), 36 GB RAM, macOS 26.x, Release build (-O2). Each result is the median of 3 repetitions.

Source: xbase/timer_bench.cpp

| Benchmark | N | Time (ns) | CPU (ns) | Throughput |
|---|---|---|---|---|
| BM_Timer_SubmitCancel | — | 68.7 | 61.0 | — |
| BM_Timer_SubmitBatch | 10 | 1,287 | 1,247 | 8.02 M items/s |
| BM_Timer_SubmitBatch | 100 | 7,590 | 6,538 | 15.3 M items/s |
| BM_Timer_SubmitBatch | 1,000 | 61,647 | 53,211 | 18.8 M items/s |
| BM_Timer_FirePoll | 10 | 3,003 | 3,003 | 3.33 M items/s |
| BM_Timer_FirePoll | 100 | 16,993 | 15,878 | 6.30 M items/s |
| BM_Timer_FirePoll | 1,000 | 172,412 | 153,600 | 6.51 M items/s |

Key Observations:

- Submit+Cancel cycle takes ~61 ns CPU time, down from ~121 ns in the calloc-based implementation. The improvement comes from swapping calloc/free for xSlabMt (see slab.md); the heap push + heap remove are unchanged.

- Batch submit throughput scales from ~8 M to ~19 M items/s as batch size grows. Larger batches amortise the per-entry xSlabMt CAS across the heap-push dominated cost.

- Fire+Poll is slower than submit alone because it includes the MPSC queue transfer and callback invocation. At N=1,000 it sustains ~6.5 M timer fires/s.

task.h — N:M Task Model

Introduction

task.h provides a lightweight N:M concurrent task model where N user tasks are multiplexed onto M OS threads managed by a task group (thread pool). It supports lazy thread creation, configurable queue capacity, per-task result retrieval, and a global shared task group for convenience.

Design Philosophy

- Lazy Thread Spawning — Worker threads are created on-demand when tasks are submitted and no idle thread is available, up to the configured maximum. This avoids pre-allocating threads that may never be used, reducing resource consumption for bursty workloads.

- Simple Submit/Wait Model — Tasks are submitted with xTaskSubmit() and optionally awaited with xTaskWait(). This mirrors the future/promise pattern found in higher-level languages, but in pure C with minimal overhead.

- Safe Cancellation — xTaskCancel() uses a single CAS (compare-and-swap) to atomically transition a queued task to the cancelled state. If the task is still in the queue, the cancel succeeds and the caller can safely release the task's argument. If the task is already running or done, the cancel fails and the caller must xTaskWait() first.

- Configurable Capacity — The task group can be configured with a maximum thread count and queue capacity. When the queue is full, xTaskSubmit() returns NULL, giving the caller explicit backpressure.

- Global Shared Group — xTaskGroupGlobal() provides a lazily-initialized, process-wide task group with default settings (unlimited threads, no queue cap). It's automatically destroyed via atexit(), making it convenient for fire-and-forget usage.

Architecture

graph TD

subgraph "Task Group"

QUEUE["Task Queue (FIFO)"]

W1["Worker Thread 1"]

W2["Worker Thread 2"]

WN["Worker Thread N"]

end

APP["Application"] -->|"xTaskSubmit()"| QUEUE

QUEUE -->|"dequeue"| W1

QUEUE -->|"dequeue"| W2

QUEUE -->|"dequeue"| WN

W1 -->|"done"| RESULT["xTaskWait() → result"]

W2 -->|"done"| RESULT

WN -->|"done"| RESULT

style APP fill:#4a90d9,color:#fff

style QUEUE fill:#f5a623,color:#fff

style RESULT fill:#50b86c,color:#fff

Implementation Details

Internal Structure

struct xTask_ {

xTaskFunc fn; // User function

void *arg; // User argument

xNote note; // 4-byte one-shot completion notification

void *result; // Return value of fn

struct xTaskGroup_ *group; // Back-pointer to owning group

struct xTask_ *next; // Intrusive queue linkage (task queue + TLS freelist)

xMpsc done_link; // Lock-free done-list linkage (xMpsc)

atomic_int state; // QUEUED → RUNNING/CANCELLED → DONE (CAS-based cancel)

};

// sizeof(xTask_) ≈ 48 bytes (down from ~136 bytes with mutex+cond)

struct xTaskGroup_ {

pthread_t *workers; // Dynamic array of worker threads

size_t max_threads; // Upper bound (SIZE_MAX if unlimited)

size_t nthreads; // Currently spawned threads

pthread_mutex_t qlock; // Protects the task queue

pthread_cond_t qcond; // Wakes idle workers

struct xTask_ *qhead, *qtail; // FIFO task queue

size_t qsize, qcap; // Current size and capacity

xMpsc *done_head; // Lock-free MPSC done queue (head)

xMpsc *done_tail; // Lock-free MPSC done queue (tail)

size_t idle; // Number of idle workers

atomic_size_t pending; // Submitted - finished

atomic_size_t done_count; // Tasks completed

pthread_cond_t wcond; // Dedicated cond for xTaskGroupWait()

bool shutdown; // Shutdown flag

};

TLS Freelist

In the common event-loop offload path, xTaskSubmit() (alloc) and xTaskWait() (free) happen on the same thread. A per-thread freelist eliminates malloc/free overhead entirely — zero locks, zero atomics. The task->next pointer is reused as the freelist link (zero extra memory). A per-thread cap of 64 prevents unbounded caching.

static __thread struct {

struct xTask_ *head;

size_t count;

} tl_free = {NULL, 0};
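The allocation and release paths around such a freelist might look like the sketch below. It uses C11 `_Thread_local` rather than `__thread`, and the `Node` type and the `node_alloc` / `node_free` helpers are hypothetical; only the cap of 64 is taken from the text above.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

#define FREELIST_CAP 64 /* per-thread cap, as described above */

typedef struct Node {
    struct Node *next; /* reused as the freelist link while cached */
    int payload;
} Node;

static _Thread_local struct {
    Node *head;
    size_t count;
} tl_cache = {NULL, 0};

/* Pop from the calling thread's cache, falling back to malloc(). */
static Node *node_alloc(void) {
    Node *n = tl_cache.head;
    if (n) {
        tl_cache.head = n->next;
        tl_cache.count--;
    } else {
        n = malloc(sizeof *n);
    }
    if (n) n->next = NULL;
    return n;
}

/* Push onto the calling thread's cache; free() once the cap is reached. */
static void node_free(Node *n) {
    assert(n != NULL);
    if (tl_cache.count >= FREELIST_CAP) {
        free(n);
        return;
    }
    n->next = tl_cache.head;
    tl_cache.head = n;
    tl_cache.count++;
}
```

No locks or atomics are needed because each thread only ever touches its own list. A node released on a different thread than the one that allocated it simply joins that thread's cache.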

Worker Loop

Each worker thread runs worker_loop():

1. Acquire lock and increment idle count.

2. Wait on qcond while the queue is empty and not shutting down.

3. Dequeue one task, decrement idle.

4. CAS state QUEUED → RUNNING — if the CAS fails (task was cancelled), skip execution.

5. Execute task->fn(task->arg) (only if step 4 succeeded).

6. Push to done queue via xMpscPush() (lock-free, wait-free for producers).

7. Signal completion via xNoteSignal() (atomic store + kernel wake).

8. Update counters — decrement pending, signal wcond if all tasks are done.
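The race between a canceller and a worker comes down to two compare-and-swap operations on the same state word, and only one of them can win. A minimal sketch with C11 atomics follows; the `Task` type, the `ST_*` constants and the two functions are hypothetical stand-ins, not the library's definitions.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>

enum { ST_QUEUED, ST_RUNNING, ST_CANCELLED, ST_DONE };

typedef struct {
    atomic_int state;
    int runs; /* how many times the task body executed */
} Task;

static void task_init(Task *t) {
    assert(t != NULL);
    atomic_init(&t->state, ST_QUEUED);
    t->runs = 0;
}

/* Canceller: succeeds only while the task is still queued. */
static bool task_cancel(Task *t) {
    int expected = ST_QUEUED;
    return atomic_compare_exchange_strong(&t->state, &expected, ST_CANCELLED);
}

/* Worker: claims the task with a CAS from the same starting state,
 * and skips the body if the claim fails (the task was cancelled). */
static bool task_run(Task *t) {
    int expected = ST_QUEUED;
    if (!atomic_compare_exchange_strong(&t->state, &expected, ST_RUNNING)) return false;
    t->runs++; /* stand-in for fn(arg) */
    atomic_store(&t->state, ST_DONE);
    return true;
}
```

Because both sides start from QUEUED, a successful cancel guarantees the body never runs, which is what makes it safe for the caller to release the argument right away.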

Task Submission Flow

flowchart TD

SUBMIT["xTaskSubmit(group, fn, arg)"]

CHECK_CAP{"Queue full?"}

ENQUEUE["Enqueue task"]

CHECK_IDLE{"Idle workers > 0?"}

SIGNAL["Signal qcond"]

CHECK_MAX{"nthreads < max?"}

SPAWN["Spawn new worker"]

DONE["Return task handle"]

FAIL["Return NULL"]

SUBMIT --> CHECK_CAP

CHECK_CAP -->|Yes| FAIL

CHECK_CAP -->|No| ENQUEUE

ENQUEUE --> CHECK_IDLE

CHECK_IDLE -->|Yes| SIGNAL

CHECK_IDLE -->|No| CHECK_MAX

CHECK_MAX -->|Yes| SPAWN

CHECK_MAX -->|No| DONE

SPAWN --> SIGNAL

SIGNAL --> DONE

style SUBMIT fill:#4a90d9,color:#fff

style FAIL fill:#e74c3c,color:#fff

style DONE fill:#50b86c,color:#fff

Separate Wait Conditions

The implementation uses two separate condition variables:

- qcond — Wakes idle workers when a new task arrives.

- wcond — Wakes xTaskGroupWait() callers when all tasks complete.

Using a single condition variable caused lost wakeups: pthread_cond_signal() could wake an idle worker instead of the GroupWait caller, leaving it blocked forever.

Global Task Group

xTaskGroupGlobal() uses pthread_once for thread-safe lazy initialization. The group is registered with atexit() for automatic cleanup. It uses default configuration (unlimited threads, no queue cap).

API Reference

Types

| Type | Description |
|---|---|
| xTaskFunc | void *(*)(void *arg) — Task function signature. Returns a result pointer. |
| xTask | Opaque handle to a submitted task |
| xTaskGroup | Opaque handle to a task group (thread pool) |
| xTaskGroupConf | Configuration struct: nthreads (0 = auto), queue_cap (0 = unbounded) |

Functions

| Function | Signature | Description | Thread Safety |
|---|---|---|---|
| xTaskGroupCreate | xTaskGroup xTaskGroupCreate(const xTaskGroupConf *conf) | Create a task group. NULL conf = defaults. | Not thread-safe |
| xTaskGroupDestroy | void xTaskGroupDestroy(xTaskGroup g) | Wait for pending tasks, then destroy. | Not thread-safe |
| xTaskSubmit | xTask xTaskSubmit(xTaskGroup g, xTaskFunc fn, void *arg) | Submit a task. Returns NULL if queue is full. | Thread-safe |
| xTaskWait | xErrno xTaskWait(xTask t, void **result) | Block until task completes. Returns xErrno_Cancelled if the task was cancelled. | Thread-safe |
| xTaskCancel | xErrno xTaskCancel(xTask t) | Cancel a queued task. Returns xErrno_Ok on success, xErrno_Busy if already running/done. | Thread-safe |
| xTaskGroupWait | xErrno xTaskGroupWait(xTaskGroup g) | Block until all pending tasks complete. | Thread-safe |
| xTaskGroupThreads | size_t xTaskGroupThreads(xTaskGroup g) | Return number of spawned worker threads. | Thread-safe (atomic read) |
| xTaskGroupPending | size_t xTaskGroupPending(xTaskGroup g) | Return number of pending tasks. | Thread-safe (atomic read) |
| xTaskGroupGlobal | xTaskGroup xTaskGroupGlobal(void) | Get the global shared task group (lazy init). | Thread-safe |

Usage Examples

Basic Task Submission

#include <stdio.h>
#include <xbase/task.h>

// Doubles the int that arg points to and returns the same pointer as the result.
static void *compute(void *arg) {
    int *val = (int *)arg;
    *val *= 2;
    return val;
}

int main(void) {
    xTaskGroup group = xTaskGroupCreate(NULL); // NULL conf = defaults
    int value = 21;

    xTask task = xTaskSubmit(group, compute, &value);
    void *result = NULL;
    xTaskWait(task, &result); // Blocks until compute() has returned
    printf("Result: %d\n", *(int *)result); // 42

    xTaskGroupDestroy(group);
    return 0;
}

Parallel Map

#include <stdio.h>
#include <xbase/task.h>

#define N 8

// Squares the int that arg points to, in place.
static void *square(void *arg) {
    int *val = (int *)arg;
    *val = (*val) * (*val);
    return val;
}

int main(void) {
    xTaskGroupConf conf = { .nthreads = 4, .queue_cap = 0 };
    xTaskGroup group = xTaskGroupCreate(&conf);

    int data[N] = {1, 2, 3, 4, 5, 6, 7, 8};
    xTask tasks[N];

    for (int i = 0; i < N; i++)
        tasks[i] = xTaskSubmit(group, square, &data[i]);

    // Wait for all
    xTaskGroupWait(group);

    for (int i = 0; i < N; i++)
        printf("data[%d] = %d\n", i, data[i]);

    // Clean up task handles
    for (int i = 0; i < N; i++)
        xTaskWait(tasks[i], NULL);

    xTaskGroupDestroy(group);
    return 0;
}

Cancelling a Task

#include <stdio.h>
#include <stdlib.h>
#include <xbase/task.h>

static void *process(void *arg) {
    int *data = (int *)arg;
    printf("Processing: %d\n", *data);
    return NULL;
}

int main(void) {
    xTaskGroup group = xTaskGroupCreate(NULL);

    int *data = malloc(sizeof(int));
    if (data == NULL) {
        xTaskGroupDestroy(group);
        return 1;
    }
    *data = 42;

    xTask task = xTaskSubmit(group, process, data);

    // Try to cancel. On success the task never runs, so data can be freed now.
    if (xTaskCancel(task) == xErrno_Ok) {
        free(data); // Safe: fn was never called
    } else {
        // Task is already running or done: wait before freeing
        xTaskWait(task, NULL);
        free(data);
    }

    xTaskGroupDestroy(group);
    return 0;
}

Using the Global Task Group

#include <stdio.h>
#include <xbase/task.h>

static void *work(void *arg) {
    printf("Running on global pool: %s\n", (char *)arg);
    return NULL;
}

int main(void) {
    xTask t = xTaskSubmit(xTaskGroupGlobal(), work, "hello");
    xTaskWait(t, NULL);

    // No need to destroy the global group
    return 0;
}

Use Cases

- CPU-Bound Parallel Processing: Distribute computation across multiple cores. Use xTaskGroupWait() to synchronize at barriers.
- Event Loop Offload: The event loop's xEventLoopSubmit() uses xTaskGroup internally to run work functions on worker threads, then delivers results back to the loop thread.
- Background I/O: Offload blocking file I/O (e.g., fsync, large reads) to a thread pool to keep the main thread responsive.

Best Practices

- Always call xTaskWait() or let xTaskGroupDestroy() clean up. Each xTaskSubmit() allocates a task struct (from the TLS freelist or malloc). Task memory is reclaimed when the done queue is drained (during xTaskGroupWait() or xTaskGroupDestroy()). Leaking task handles leaks resources.
- Check the xTaskCancel() return value before releasing the arg. xErrno_Ok means the task will not execute, so the arg is safe to free. xErrno_Busy means it is already running or done, so you must call xTaskWait() first.
- Set queue_cap for backpressure. Without a cap, unbounded submission can exhaust memory. A bounded queue lets you detect overload via NULL returns from xTaskSubmit().
- Don't destroy the global group. xTaskGroupGlobal() is managed internally and destroyed at exit via atexit(). Passing it to xTaskGroupDestroy() is undefined behavior.
- Use xTaskGroupWait() for barriers, not busy-polling. It uses a dedicated condition variable and blocks efficiently.
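The backpressure idea behind queue_cap can be sketched without the library. Below, a hypothetical fixed-capacity FIFO (`BoundedQueue`, `queue_try_push()`, and `QUEUE_CAP` are illustrative names, not xbase API) rejects a push when full instead of growing, which is the same signal a NULL return from xTaskSubmit() gives the caller. The sketch is single-threaded; a real pool would guard the queue with a mutex.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define QUEUE_CAP 4 // Hypothetical cap, playing the role of queue_cap

typedef struct BoundedQueue {
    void  *items[QUEUE_CAP];
    size_t head;  // Index of the oldest item
    size_t count; // Number of queued items
} BoundedQueue;

// Try to enqueue; returns false instead of blocking or growing when full.
bool queue_try_push(BoundedQueue *q, void *item) {
    assert(q != NULL);
    if (q->count == QUEUE_CAP)
        return false;
    q->items[(q->head + q->count) % QUEUE_CAP] = item;
    q->count++;
    return true;
}

// Dequeue the oldest item, or return NULL when the queue is empty.
void *queue_pop(BoundedQueue *q) {
    assert(q != NULL);
    if (q->count == 0)
        return NULL;
    void *item = q->items[q->head];
    q->head = (q->head + 1) % QUEUE_CAP;
    q->count--;
    return item;
}

// Push n items in a burst and report how many were rejected.
size_t submit_burst(BoundedQueue *q, int *items, size_t n) {
    size_t rejected = 0;
    for (size_t i = 0; i < n; i++)
        if (!queue_try_push(q, &items[i]))
            rejected++;
    return rejected;
}
```

A producer that sees a rejection can drop the work, retry later, or run it inline; which policy fits depends on the application.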

Comparison with Other Libraries

| Feature | xbase task.h | pthread | C11 threads | GCD (libdispatch) |
|---|---|---|---|---|
| Abstraction | Task (submit/wait) | Thread (create/join) | Thread (create/join) | Block (dispatch_async) |
| Thread Management | Automatic (lazy spawn) | Manual | Manual | Automatic |
| Queue | Built-in FIFO with cap | N/A | N/A | Built-in (serial/concurrent) |
| Result Retrieval | xTaskWait(t, &result) | pthread_join(t, &result) | thrd_join(t, &result) | Completion handler |
| Group Wait | xTaskGroupWait() | Manual barrier | Manual barrier | dispatch_group_wait() |
| Backpressure | queue_cap → NULL on full | N/A | N/A | N/A (unbounded) |
| Global Pool | xTaskGroupGlobal() | N/A | N/A | dispatch_get_global_queue() |
| Platform | macOS + Linux | POSIX | C11 | macOS + Linux (via libdispatch) |
| Dependencies | pthread | OS | OS | OS / libdispatch |

Key Differentiator: xbase's task model provides a simple, portable thread pool with lazy spawning and explicit backpressure — features that require significant boilerplate with raw pthreads. Unlike GCD, it gives you direct control over thread count and queue capacity.

memory.h — Reference-Counted Memory Management

Introduction

memory.h provides a vtable-driven, reference-counted memory management system for C. It enables object lifecycle management (construction, destruction, retain, release, copy, move) through a virtual table pattern, bringing RAII-like semantics to pure C. The XMALLOC(T) macro allocates an object with an embedded header that tracks the reference count and vtable pointer.

Design Philosophy

- vtable-Driven Lifecycle: Each object type defines a static xVTable with optional function pointers for ctor, dtor, retain, release, copy, and move. This decouples lifecycle logic from the allocation mechanism, similar to C++ virtual destructors or Objective-C's class methods.
- Hidden Header Pattern: A Header struct is prepended to every allocation, storing the type name (for debugging), size, reference count, and vtable pointer. The user receives a pointer past the header, so the header is invisible to normal usage.
- Atomic Reference Counting: xRetain() and xRelease() use atomic operations (__ATOMIC_SEQ_CST) to safely manage reference counts across threads. When the count reaches zero, the destructor is called and memory is freed.
- Macro Convenience: XMALLOC(T) and XMALLOCEX(T, sz) macros generate the correct xAlloc() call with the type name string, size, and vtable pointer, reducing boilerplate.

Architecture

graph TD

MACRO["XMALLOC(T) / XMALLOCEX(T, sz)"]

ALLOC["xAlloc(name, size, count, vtab)"]

HEADER["Header + Object"]

RETAIN["xRetain(ptr)<br/>atomic refs++"]

RELEASE["xRelease(ptr)<br/>atomic refs--"]

FREE["xFree(ptr)<br/>dtor + free"]

COPY["xCopy(ptr, other)"]

MOVE["xMove(ptr, other)"]

MACRO --> ALLOC

ALLOC --> HEADER

HEADER --> RETAIN

HEADER --> RELEASE

RELEASE -->|"refs == 0"| FREE

HEADER --> COPY

HEADER --> MOVE

style MACRO fill:#4a90d9,color:#fff

style RELEASE fill:#e74c3c,color:#fff

style FREE fill:#e74c3c,color:#fff

Implementation Details

Memory Layout

graph LR

subgraph "malloc'd block"

HDR["Header<br/>name | size | refs | vtab"]

OBJ["User Object<br/>(sizeof(T) bytes)"]

EXTRA["Extra bytes<br/>(XMALLOCEX only)"]

end

PTR["xAlloc() returns →"] --> OBJ

style HDR fill:#f5a623,color:#fff

style OBJ fill:#4a90d9,color:#fff

style EXTRA fill:#50b86c,color:#fff

The actual memory layout:

┌────────────────────────────────────────────────────────┐
│ Header (hidden)                                        │
│   const char *name   type name string (e.g. "Foo")     │
│   size_t size        sizeof(T)                         │
│   size_t refs        reference count (starts at 1)     │
│   xVTable *vtab      pointer to static vtable          │
├────────────────────────────────────────────────────────┤
│ User Object (returned pointer)                         │
│   T fields...                                          │
│   [optional extra bytes from XMALLOCEX]                │
└────────────────────────────────────────────────────────┘
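The layout above comes down to one allocation and a little pointer arithmetic. The following is a minimal sketch under stated assumptions, not the memory.h implementation: `Header` mirrors the fields in the diagram, while `hdr_alloc()`, `hdr_of()`, and `hdr_free()` are hypothetical helper names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>
#include <string.h>

// Mirrors the header fields from the diagram; vtab is left untyped here.
typedef struct Header {
    const char *name;
    size_t      size;
    size_t      refs;
    const void *vtab;
} Header;

// Allocate header and object in one block; return a pointer past the header.
// Caveat: a production allocator would pad the header so the object keeps
// the strictest alignment the platform requires.
void *hdr_alloc(const char *name, size_t size) {
    Header *h = malloc(sizeof(Header) + size);
    if (h == NULL)
        return NULL;
    h->name = name;
    h->size = size;
    h->refs = 1; // A fresh object starts with one owner
    h->vtab = NULL;
    void *obj = h + 1; // First byte after the header
    memset(obj, 0, size);
    return obj;
}

// Recover the hidden header from a user pointer by stepping back one Header.
Header *hdr_of(void *obj) {
    assert(obj != NULL);
    return (Header *)obj - 1;
}

// Free the whole block, which starts at the header, not at the user pointer.
void hdr_free(void *obj) {
    if (obj != NULL)
        free(hdr_of(obj));
}
```

Passing the user pointer straight to free() would be a bug with this layout, because the block handed out by malloc() starts at the header.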

XMALLOC / XMALLOCEX Macro Expansion

// Given:
typedef struct Foo Foo;
struct Foo { int x; char buf[]; };

// Define the ctor and dtor first so the vtable initializer can reference them.
XDEF_CTOR(Foo) { self->x = 0; }
XDEF_DTOR(Foo) { /* cleanup */ }
XDEF_VTABLE(Foo) { .ctor = FooCtor, .dtor = FooDtor };

// XMALLOC(Foo) expands to:
(Foo *)xAlloc("Foo", sizeof(Foo), 1, &FooVTable)

// XMALLOCEX(Foo, 128) expands to:
(Foo *)xAlloc("Foo", sizeof(Foo) + 128, 1, &FooVTable)
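Macros of this shape are typically built from the preprocessor's stringizing (`#T`) and token-pasting (`T##VTable`) operators. The sketch below shows one way to write them. All `DEMO_*` and `demo_*` names are hypothetical, and the stub allocator only records its arguments so the expansion can be inspected; it is not the real xAlloc().

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

// Hypothetical vtable; a stand-in, not the real xVTable.
typedef struct DemoVTable {
    void (*ctor)(void *self);
    void (*dtor)(void *self);
} DemoVTable;

// The stub remembers the last name and size it was called with.
static const char *demo_last_name = NULL;
static size_t demo_last_size = 0;

void *demo_alloc(const char *name, size_t size, const DemoVTable *vtab) {
    assert(name != NULL && vtab != NULL);
    demo_last_name = name;
    demo_last_size = size;
    void *obj = calloc(1, size);
    if (obj != NULL && vtab->ctor != NULL)
        vtab->ctor(obj); // Run the type's constructor on the new object
    return obj;
}

// #T turns the type name into a string; T##Suffix pastes one identifier.
#define DEMO_DEF_CTOR(T)     static void T##Ctor(void *self)
#define DEMO_DEF_VTABLE(T)   static const DemoVTable T##VTable =
#define DEMO_MALLOC(T)       ((T *)demo_alloc(#T, sizeof(T), &T##VTable))
#define DEMO_MALLOCEX(T, sz) ((T *)demo_alloc(#T, sizeof(T) + (sz), &T##VTable))

typedef struct Foo { int x; char buf[]; } Foo;

// The ctor is defined before the vtable that refers to it.
DEMO_DEF_CTOR(Foo) { ((Foo *)self)->x = 7; }
DEMO_DEF_VTABLE(Foo) { .ctor = FooCtor, .dtor = NULL };

const char *demo_alloc_last_name(void) { return demo_last_name; }
size_t demo_alloc_last_size(void) { return demo_last_size; }
```

With these definitions, `DEMO_MALLOCEX(Foo, 128)` requests `sizeof(Foo) + 128` bytes, so the flexible array member `buf` has 128 usable bytes.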

Reference Count Lifecycle

sequenceDiagram

participant App

participant Alloc as xAlloc

participant Header

participant VTable

App->>Alloc: XMALLOC(Foo)

Alloc->>Header: malloc(sizeof(Header) + sizeof(Foo))

Alloc->>Header: refs = 1

Alloc->>VTable: vtab->ctor(ptr)

Alloc-->>App: Foo *ptr

App->>Header: xRetain(ptr) → refs = 2

App->>Header: xRelease(ptr) → refs = 1

App->>Header: xRelease(ptr) → refs = 0

Header->>VTable: vtab->release(ptr)

Header->>VTable: vtab->dtor(ptr)

Header->>Header: free(hdr)

Thread Safety

- xRetain() and xRelease() are thread-safe: they use xAtomicAdd / xAtomicSub with sequentially consistent ordering.
- xAlloc(), xFree(), xCopy(), and xMove() are not thread-safe: call them from a single owner or with external synchronization.
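To illustrate why atomic counting is enough for retain and release, here is a small sketch using C11 `<stdatomic.h>` in place of the xAtomic wrappers (an assumption; the wrappers' exact signatures are not shown in this document). `Obj`, `obj_retain()`, `obj_release()`, and `run_refcount_demo()` are hypothetical names.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stdlib.h>

typedef struct Obj {
    atomic_size_t refs;
    int payload;
} Obj;

static atomic_int g_destroyed = 0; // Counts how many times an object was freed

Obj *obj_new(int payload) {
    Obj *o = malloc(sizeof(*o));
    if (o == NULL)
        return NULL;
    atomic_init(&o->refs, 1);
    o->payload = payload;
    return o;
}

// Both operations default to sequentially consistent ordering.
void obj_retain(Obj *o) { atomic_fetch_add(&o->refs, 1); }

void obj_release(Obj *o) {
    size_t prev = atomic_fetch_sub(&o->refs, 1);
    assert(prev > 0);
    if (prev == 1) { // This caller dropped the last reference
        atomic_fetch_add(&g_destroyed, 1);
        free(o);
    }
}

static void *worker(void *arg) {
    Obj *o = arg;
    for (int i = 0; i < 10000; i++) {
        obj_retain(o);
        obj_release(o);
    }
    obj_release(o); // Drop the reference handed to this thread
    return NULL;
}

// Shares one object across nthreads; returns how many times it was destroyed.
int run_refcount_demo(int nthreads) {
    enum { MAX_THREADS = 16 };
    pthread_t tids[MAX_THREADS];
    if (nthreads < 1 || nthreads > MAX_THREADS)
        return -1;
    atomic_store(&g_destroyed, 0);
    Obj *o = obj_new(42);
    if (o == NULL)
        return -1;
    int started = 0;
    for (int i = 0; i < nthreads; i++) {
        obj_retain(o); // One reference per thread, taken before it starts
        if (pthread_create(&tids[started], NULL, worker, o) != 0) {
            obj_release(o);
            break;
        }
        started++;
    }
    for (int i = 0; i < started; i++)
        pthread_join(tids[i], NULL);
    obj_release(o); // Creator's reference; the object is freed here
    return atomic_load(&g_destroyed);
}
```

Each thread holds its own reference for its whole lifetime, so the count can never reach zero while another thread is still using the object. That ownership discipline, not the atomics alone, is what makes concurrent retain and release safe.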

API Reference

Macros

| Macro | Expansion | Description |
|---|---|---|
| XDEF_VTABLE(T) | static xVTable TVTable = | Define a static vtable for type T |
| XDEF_CTOR(T) | static void TCtor(T *self) | Define a constructor for type T |
| XDEF_DTOR(T) | static void TDtor(T *self) | Define a destructor for type T |
| XMALLOC(T) | (T *)xAlloc("T", sizeof(T), 1, &TVTable) | Allocate one T with vtable |
| XMALLOCEX(T, sz) | (T *)xAlloc("T", sizeof(T) + sz, 1, &TVTable) | Allocate T + extra bytes |

Types

| Type | Description |

|---|---|